What Is A Qubit

The real story of what a qubit is turns out to be far weirder, older, and more consequential than the version most people know.

At a Glance

- Subject: What Is A Qubit

- First Emerged: 1981–1995 (conceptualization to experiment)

- Core Idea: A quantum bit whose state can be a superposition of 0 and 1, and which can be entangled with other qubits

- Impact: Fuels quantum computation, cryptography, and fundamental physics debates

- Notable Pros/Cons: Large (in some cases exponential) speedups for certain tasks; extremely sensitive to noise

The Shortcut That Obscures the Truth

Ask a casual coder what a qubit is, and you’ll likely hear: “It’s like a bit, but it can be 0 and 1 at the same time.” That sentence sells tickets but misses the thrill. A qubit is not merely a two-valued switch; it’s a whisper of probability, a stitch in the fabric where measurement collapses possibility into fact. In the lab, a qubit is a physical system — an electron’s spin, a superconducting circuit, a trapped ion — that faithfully carries a quantum state described by amplitudes, not a stubborn binary yes or no.

Wait, really: a qubit can exist in a continuum of states between 0 and 1? In the mathematics, yes. A general qubit state is α|0⟩ + β|1⟩, where the amplitudes α and β are complex numbers satisfying |α|² + |β|² = 1, so there are infinitely many possible states. But the moment you measure, the state collapses to a single outcome: 0 with probability |α|², or 1 with probability |β|². The amplitudes themselves are never read out directly; you infer them from the distribution of outcomes across many repeated runs. That tension, between continuous amplitudes and random, discrete outcomes, is where the qubit earns its mystique.
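The measurement rule above can be sketched as a short simulation. `measure` is a hypothetical helper written for illustration, not a library API. It samples outcomes from α|0⟩ + β|1⟩ using only Python's standard library, and it assumes measurement in the 0/1 (computational) basis.

```python
import math
import random


def measure(alpha: complex, beta: complex, shots: int, seed: int = 0) -> dict:
    """Simulate repeated 0/1-basis measurements of alpha|0> + beta|1>.

    Each shot collapses to 0 with probability |alpha|^2, otherwise to 1.
    """
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if not math.isclose(norm, 1.0, rel_tol=1e-9):
        raise ValueError(f"state is not normalized: |a|^2 + |b|^2 = {norm}")
    p0 = abs(alpha) ** 2
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    counts = {"0": 0, "1": 0}
    for _ in range(shots):
        outcome = "0" if rng.random() < p0 else "1"
        counts[outcome] += 1
    return counts


# Equal superposition with a relative phase i: |alpha|^2 = |beta|^2 = 1/2.
alpha = 1 / math.sqrt(2)
beta = 1j / math.sqrt(2)
counts = measure(alpha, beta, shots=10_000)
print(counts)
print("estimated P(0):", counts["0"] / 10_000)  # close to 0.5
```

The relative phase i on β has no effect on these counts. A 0/1-basis measurement sees only |α|² and |β|², which is one reason a single kind of measurement cannot reconstruct the full state.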

From Thought Experiments to Cold, Real Hardware

The modern qubit lineage begins in the 1980s with thinkers who asked: can quantum states be used to perform computation more efficiently than classical machines? The pivotal moment came when physicists Richard Feynman and Paul Benioff challenged the limits of simulating quantum systems with classical computers. Their question wasn't theoretical whimsy; it was a dare to build machines that could manipulate superpositions purposefully.

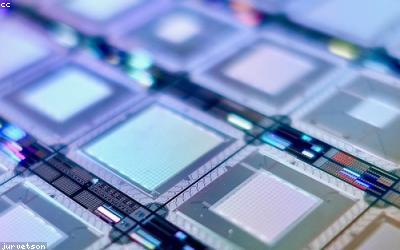

The first practical qubits arrived in the 1990s and 2000s: superconducting circuits cooled to fractions of a kelvin, trapped ions suspended in radiofrequency fields, and photons guided through integrated circuits. Each platform has its own quirks. Superconducting qubits demand cryogenic temperatures, ion-trap qubits require ultra-high vacuum, and photonic qubits insist on precision optics. Yet all share the same north star: controlling and reading out quantum states with fidelity high enough to perform computations that outpace classical rivals on specific tasks.

Superposition, Entanglement, and Interference

Superposition is the qubit's party trick: a single qubit holds amplitudes for both 0 and 1 until a measurement decides. But the real fireworks appear when qubits pair up and become entangled. Entanglement is not mystical magic; it's a precise correlation that defies classical intuition. Two qubits can share a joint state that cannot be described as two separate single-qubit states, so measuring one instantly tells you something about the other, regardless of distance. This is the "spooky action at a distance" Einstein objected to, yet it cannot be used to send signals faster than light. Far from superstition, it's a predictable resource that enables quantum teleportation, superdense coding, and quantum error correction.
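The following plain-Python sketch makes "a correlation no classical system can reproduce" a little more concrete. It relies on a standard fact about pure two-qubit states: a|00⟩ + b|01⟩ + c|10⟩ + d|11⟩ factors into two independent single-qubit states exactly when ad − bc = 0. The helper names (`is_product_state`, `sample_pair`) are illustrative and not from any library.

```python
import math
import random

# Two-qubit amplitudes ordered as [|00>, |01>, |10>, |11>].


def is_product_state(state, tol=1e-12) -> bool:
    """True if a|00> + b|01> + c|10> + d|11> factors into two single-qubit
    states, which for a pure state happens exactly when a*d - b*c == 0."""
    a, b, c, d = state
    return abs(a * d - b * c) < tol


def sample_pair(state, rng):
    """Measure both qubits in the 0/1 basis and return the two bits."""
    probs = [abs(amp) ** 2 for amp in state]
    index = rng.choices(range(4), weights=probs)[0]
    return index >> 1, index & 1  # (first qubit, second qubit)


s = 1 / math.sqrt(2)
bell = [s, 0, 0, s]              # (|00> + |11>) / sqrt(2): entangled
product = [0.5, 0.5, 0.5, 0.5]   # |+>|+>: two independent qubits

print(is_product_state(bell))     # False
print(is_product_state(product))  # True

rng = random.Random(1)
pairs = [sample_pair(bell, rng) for _ in range(1000)]
print(all(q0 == q1 for q0, q1 in pairs))  # True: outcomes always agree
```

One caveat applies. Perfect agreement in a single measurement basis could be mimicked by two classical coins that were secretly set to match. What has no classical counterpart is that the entangled state cannot be factored at all, and that its correlations persist when both qubits are measured in other bases. Bell-test experiments check exactly that.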

"If you really want to understand quantum computing, learn how entanglement creates correlations that no classical system can reproduce." — Dr. Amina Khatri, 2022

Interference acts like the DJ at the quantum party. By arranging the phases of probability amplitudes, an algorithm makes the paths leading to desirable outcomes reinforce one another while the rest cancel out. Scaled up to many qubits, this lets a quantum circuit produce results whose amplitudes would take a classical computer ages to simulate. The choreography is delicate: a single stray photon or a tiny thermal wobble can scramble the phases, and the computation dissolves into noise.
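A single qubit is enough to watch interference at work. This sketch writes the gate matrices out by hand in plain Python and applies a Hadamard gate twice. The first Hadamard creates an equal superposition. The second makes the two contributions to the |1⟩ amplitude cancel, so the qubit returns to 0 with certainty. Inserting a phase flip (the Pauli-Z gate) in between reverses which outcome survives.

```python
import math

s = 1 / math.sqrt(2)
H = [[s, s], [s, -s]]   # Hadamard gate
Z = [[1, 0], [0, -1]]   # Pauli-Z: flips the sign (phase) of |1>


def apply(gate, state):
    """Multiply a 2x2 gate matrix by a single-qubit state vector."""
    return [gate[0][0] * state[0] + gate[0][1] * state[1],
            gate[1][0] * state[0] + gate[1][1] * state[1]]


zero = [1.0, 0.0]
plus = apply(H, zero)   # amplitudes (1/sqrt2, 1/sqrt2): a 50/50 coin
back = apply(H, plus)   # the two paths into |1> carry opposite signs and cancel
probs = [abs(a) ** 2 for a in back]
print([round(p, 12) for p in probs])  # [1.0, 0.0]

# Flip the phase of |1> between the two Hadamards: now the |0> paths cancel.
flipped = apply(H, apply(Z, plus))
print([round(abs(a) ** 2, 12) for a in flipped])  # [0.0, 1.0]
```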

Quantum Gates: The Alphabet of a Qubit World

Gates are the quantum operators that transform qubits, the letters we use to spell quantum algorithms. Single-qubit gates like the Hadamard create superpositions, the Pauli-X gate swaps |0⟩ and |1⟩ just like a classical NOT, and phase gates shift the relative phase between basis states. Two-qubit gates like the CNOT weave entanglement into the computation. A sequence of gates (often dozens to hundreds in a modern circuit) maps an initial |0⟩^⊗n state to a final state. Measuring that state yields a probabilistic answer. Repeated over many runs, a well-designed algorithm reveals a pattern that, for certain problems, classical computers are not known to reproduce efficiently.
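The gates named above can be written as small matrices acting on a vector of amplitudes. This sketch builds a two-qubit state vector by hand. It applies a Hadamard to the first qubit and then a CNOT, and it ends in the entangled state (|00⟩ + |11⟩)/√2. It assumes the first qubit is the leftmost bit in the basis ordering |00⟩, |01⟩, |10⟩, |11⟩. Real toolkits differ on this convention, so treat the matrices as illustrative.

```python
import math

s = 1 / math.sqrt(2)
H = [[s, s], [s, -s]]      # Hadamard: creates superposition
I = [[1, 0], [0, 1]]       # identity: leaves a qubit alone
X = [[0, 1], [1, 0]]       # Pauli-X: the quantum NOT
# CNOT with the first qubit as control; basis order |00>, |01>, |10>, |11>.
CNOT = [[1, 0, 0, 0],
        [0, 1, 0, 0],
        [0, 0, 0, 1],
        [0, 0, 1, 0]]


def kron(a, b):
    """Kronecker (tensor) product of two square matrices."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]


def matvec(mat, vec):
    """Apply a matrix to a state vector."""
    return [sum(mat[i][j] * vec[j] for j in range(len(vec)))
            for i in range(len(mat))]


print(matvec(X, [1, 0]))               # [0, 1]: X flips |0> to |1>

state = [1, 0, 0, 0]                   # |00>
state = matvec(kron(H, I), state)      # Hadamard on the first qubit
state = matvec(CNOT, state)            # entangle: (|00> + |11>) / sqrt(2)
print([round(a, 6) for a in state])    # [0.707107, 0.0, 0.0, 0.707107]
```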

Inside the lab, a typical demonstration might run a 7-qubit circuit to factor a tiny number or to simulate a molecular Hamiltonian. The record books show that the best runs reach high success probabilities through sophisticated error mitigation, not full error correction; not yet, anyway. The gap between laboratory success and scalable, fault-tolerant quantum computers remains the frontier that keeps theorists and engineers up late at night.
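The text does not say which algorithm these factoring demonstrations use. Assuming it is a small instance of Shor's algorithm, the quantum circuit's only job is to find the period r of a^x mod N, and everything else is classical number theory. The sketch below shows that classical part. It finds the period by brute force, which is the step a quantum computer would accelerate. The function names are hypothetical.

```python
import math


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This is the step a quantum computer speeds up.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_period(a: int, n: int):
    """Classical post-processing in Shor's algorithm; None means retry."""
    g = math.gcd(a, n)
    if g != 1:
        return g, n // g          # lucky guess: a already shares a factor
    r = find_period(a, n)
    if r % 2 == 1:
        return None               # odd period: try another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None               # trivial square root: try another a
    return math.gcd(half - 1, n), math.gcd(half + 1, n)


print(find_period(7, 15))         # 4
print(factor_from_period(7, 15))  # (3, 5)
```

For N = 15 and a = 7, the powers of 7 mod 15 cycle 7, 4, 13, 1, so r = 4. Then 7² mod 15 = 4, and gcd(3, 15) = 3 and gcd(5, 15) = 5 recover the factors.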

Error, Decoherence, and the Race to Robust Qubits

No story about qubits is complete without the villains: noise and decoherence. Decoherence is the gradual erosion of a quantum state as information leaks into the environment. Every qubit is a fragile snowflake in a storm. The race has produced two complementary strategies. The first suppresses errors at the hardware level through better materials, isolation, and control. The second uses quantum error-correcting codes that hide each logical qubit inside a chorus line of physical qubits. The surface code, a star of the field, offers a practical path to scalable, fault-tolerant computation, but useful machines are expected to need millions of physical qubits. Until then, the qubit remains a delicate, shimmering thing, often requiring cryogenic purgatories and ultra-clean control lines to stay coherent long enough to matter.
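The core idea behind error-correcting codes is spending extra physical carriers to protect one logical value. The simplest possible case shows it. This sketch is a classical analogue of the three-qubit bit-flip repetition code, not the surface code. Each copy flips independently with probability p, and majority vote fails only when two or more copies flip, giving 3p²(1 − p) + p³. Real quantum codes must also correct phase errors and detect errors without measuring the encoded data directly, which this toy model ignores.

```python
import random


def logical_error_rate_formula(p: float) -> float:
    """Chance that majority vote over 3 copies fails (2 or 3 copies flip)."""
    return 3 * p**2 * (1 - p) + p**3


def simulate(p: float, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of the same failure rate (toy classical model)."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))
        if flips >= 2:            # majority vote decodes the wrong value
            failures += 1
    return failures / trials


p = 0.05
print(logical_error_rate_formula(p))  # roughly 0.00725, versus 0.05 unprotected
print(simulate(p, 100_000))
```

For any p below 1/2 the encoded error rate is lower than p, and the benefit grows quickly as p shrinks. That is the intuition behind error-correction thresholds, though the threshold for the surface code is much lower than 1/2.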

"We’re not just building qubits; we’re building a new economic order for information processing." — industry insider, 2023

Practical Applications That Make People Lean In

What is a qubit good for today? Not supremacy at every task, but pockets of potential advantage. Quantum chemistry simulations aim to reveal how electrons dance around complex molecules with a precision classical methods struggle to match. Optimization problems such as logistics, portfolio optimization, and traffic routing are being explored with hybrid quantum-classical workflows, and they have already inspired quantum-inspired heuristics that run on classical hardware. Cryptography is another arena. A large, fault-tolerant quantum computer would break RSA and ECC, so quantum-safe protocols and post-quantum cryptography are racing to replace them.

In medicine, simulating reaction pathways could unlock cheaper, faster drug discovery. In materials science, qubit-powered simulations may help predict materials that superconduct at higher temperatures. Every breakthrough invites a new wait-for-it moment: wait, really, could this lead to room-temperature superconductors or radically better batteries? The answer creeps forward as qubit counts grow and control improves.

Ethics, Access, and the Global Quantum Cold War

The qubit revolution isn’t only about physics; it’s geopolitics. Nations race to deploy quantum-secure networks, while startups race to sell “quantum advantage” as a service. There’s a quiet, almost ceremonial competition around talent pipelines: universities, national labs, and private labs jockey for the next wave of physicists who will write the blueprints for the quantum century. The ethics of who controls access to quantum computational power — how it influences surveillance, finance, and security — are as real as the physics itself.

A Glimpse Into the Future: The Day The Qubit Leaves the Lab

Imagine a world where a few hundred qubits become as common as laptops. You'd carry a device that simulates entire proteins during a flight, optimizes routing for a city in real time, and serenely handles encryption with unbreakable keys. The day this becomes practical hinges on error correction becoming affordable at scale, and on hardware platforms becoming as robust as classical chips. Some technologists predict a practical, fault-tolerant machine in the early 2030s; others expect it to take considerably longer. Either way, the qubit isn't a curiosity; it's a seed that might grow the information age into something unrecognizable.