Uncovering and Addressing Bias in AI Systems

The deeper you look into bias in AI systems, the stranger and more fascinating it becomes.

At a Glance

- Subject: Uncovering and Addressing Bias in AI Systems

- Category: Artificial Intelligence, Ethics, Machine Learning

When we think of artificial intelligence, we often imagine a coldly logical, impartial decision-making process untouched by the biases and prejudices that plague human beings. The reality is more complex and, in many ways, more troubling. As AI systems have become integral to daily life, from hiring decisions to criminal sentencing to content curation, researchers have found that these "objective" algorithms can perpetuate and amplify the very biases they were meant to avoid.

The Pervasive Problem of Algorithmic Bias

At the heart of the issue is the fact that AI systems are built by humans, who inevitably bring their own biases and perspectives to the table. The training data used to develop these algorithms often reflects the systemic inequalities present in society, encoding prejudices around race, gender, socioeconomic status, and more. Even when AI engineers set out to create "fair" and "unbiased" models, the complexity of the task can lead to unintended consequences. Removing a sensitive attribute such as race from the inputs, for instance, does little if a correlated feature such as postal code remains and acts as a proxy for it.

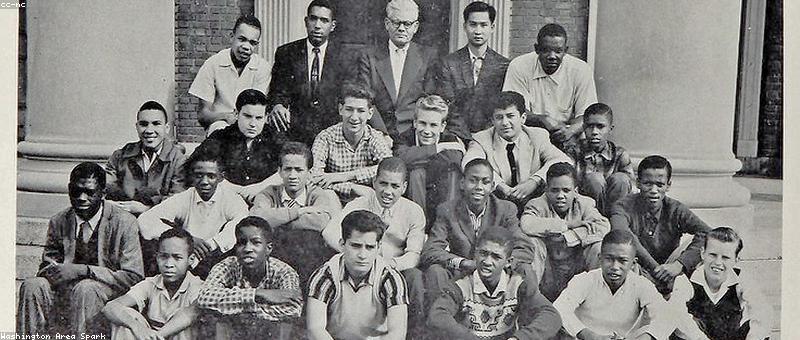

One widely cited example is COMPAS, a risk assessment algorithm used in US courtrooms to predict the likelihood that a defendant will commit future crimes. A 2016 ProPublica investigation reported that the algorithm was more likely to label Black defendants as high-risk, even when controlling for criminal history and other factors, and that Black defendants who did not go on to reoffend were nearly twice as likely as white defendants to have been labeled high-risk. Because such scores can inform bail and sentencing decisions, critics argue that skewed predictions risk contributing to the disproportionate incarceration of people of color.
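One way to quantify a disparity of this kind is to compare false positive rates across groups: among people who did not reoffend, what fraction were still flagged as high-risk? The sketch below is illustrative only. The function name, the record layout, and the toy records are assumptions made up for this example. They are not COMPAS data and not the method used in any published analysis.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return {group: false positive rate}.

    Each record is a (group, predicted_high_risk, reoffended) tuple.
    The false positive rate is the share of people who did NOT reoffend
    but were still predicted to be high-risk.
    """
    flagged = defaultdict(int)    # non-reoffenders flagged high-risk, per group
    negatives = defaultdict(int)  # all non-reoffenders, per group
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                flagged[group] += 1
    return {g: flagged[g] / n for g, n in negatives.items() if n > 0}


# Made-up toy records, purely for illustration.
toy_records = [
    ("A", True, False), ("A", True, False), ("A", False, False),
    ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", False, False), ("B", True, True),
]

rates = false_positive_rates(toy_records)
print(rates)
```

In this toy data, group A's non-reoffenders are flagged twice as often as group B's. Overall accuracy would not show that gap, which is why audits often report error rates per group.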

As AI systems become more ubiquitous, there is a growing risk of bias amplification. Small biases in training data or model design can get magnified as these systems make decisions that affect people's lives, leading to entrenched discrimination and inequality.

Uncovering the Hidden Biases

Identifying bias in AI is notoriously difficult, as the "black box" nature of many machine learning models makes it hard to trace the sources of problematic outputs. Researchers have had to develop sophisticated tools and techniques to audit these systems, from probing the training data to stress-testing model behavior on carefully curated test sets.

One promising approach borrows from adversarial machine learning: researchers deliberately feed the system challenging or adversarial inputs, such as pairs of records that differ only in a sensitive attribute, and observe how it responds. This can help surface edge cases and uncover hidden biases.
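A very simple form of this kind of probing is a counterfactual test: change only a sensitive attribute on each input and count how often the prediction flips. The following is a minimal sketch under stated assumptions. `counterfactual_flip_rate`, `toy_model`, and the applicant records are all hypothetical, and the toy model is deliberately biased so the probe has something to find.

```python
def counterfactual_flip_rate(model, inputs, attribute, swap):
    """Fraction of inputs whose prediction changes when only `attribute` is swapped.

    `model` is any callable mapping a dict of features to a prediction.
    `swap` maps each value of the attribute to its counterfactual value.
    """
    flips = 0
    for row in inputs:
        altered = dict(row)  # copy so the original input is left untouched
        altered[attribute] = swap[row[attribute]]
        if model(row) != model(altered):
            flips += 1
    return flips / len(inputs)


def toy_model(applicant):
    # Hypothetical scorer with a built-in penalty, for illustration only.
    score = applicant["years_experience"]
    if applicant["gender"] == "F":
        score -= 2
    return score >= 5


applicants = [
    {"years_experience": 3, "gender": "F"},
    {"years_experience": 5, "gender": "M"},
    {"years_experience": 6, "gender": "F"},
    {"years_experience": 9, "gender": "M"},
]

flip_rate = counterfactual_flip_rate(
    toy_model, applicants, "gender", {"F": "M", "M": "F"}
)
print(flip_rate)
```

A flip rate of zero does not prove a model is fair, since other features can act as proxies for the swapped attribute. A nonzero rate is direct evidence that the attribute itself influences decisions.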

"The more we can pull back the curtain on these AI systems, the better we can understand their flaws and limitations. Transparency and accountability are key to addressing algorithmic bias." - Dr. Jamila Michener, Cornell University

Addressing Bias Through Inclusive Design

Simply exposing bias is not enough – the real challenge lies in effectively mitigating it. One crucial step is to ensure that the teams developing AI systems are diverse and representative, bringing a range of perspectives to the table.

Additionally, there have been calls for more inclusive user-centered design practices, where AI is developed in close collaboration with the communities it will impact. This can help surface blind spots and ensure that the system's outputs and decisions align with the needs and values of diverse stakeholders.

Bias in AI is a complex, multifaceted issue that requires a holistic approach. Checking for disparate outcomes is a necessary first step, but it is not sufficient on its own: we must also examine the data, models, and development processes that give rise to those outcomes.
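For reference, the outcome check itself can be very small. The sketch below compares the rate of favorable decisions across groups and reports the ratio of the lowest rate to the highest. The function names and toy decisions are assumptions for illustration. A common rule of thumb in US employment contexts treats a ratio below 0.8 as a signal worth investigating, though that threshold is a convention rather than a definition of fairness.

```python
def selection_rates(decisions):
    """Return {group: share of favorable decisions}.

    Each decision is a (group, favorable) tuple.
    """
    totals, selected = {}, {}
    for group, favorable in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + (1 if favorable else 0)
    return {g: selected[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest.

    Assumes at least one group has a nonzero selection rate.
    """
    return min(rates.values()) / max(rates.values())


# Made-up toy decisions: group A is approved 6 times out of 10, group B 3 out of 10.
toy_decisions = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

group_rates = selection_rates(toy_decisions)
ratio = disparate_impact_ratio(group_rates)
print(group_rates, ratio)
```

A low ratio shows that outcomes differ but not why. Finding the cause still requires looking at the data and the model.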

Toward a More Equitable AI Future

As AI continues to shape more and more of our lives, the imperative to address algorithmic bias has never been greater. By shining a light on these hidden biases, developing robust auditing frameworks, and centering inclusive design, we have the opportunity to build AI systems that are truly just and equitable – not just in theory, but in practice.

It will be a long and challenging road, but the stakes are high. The future of AI is not set in stone – it is up to us to ensure that it is one where everyone, regardless of their background or identity, can have a fair and equal chance.
