Comprehensive Frameworks for Auditing AI Systems

An overview of the frameworks used to audit AI systems: the principles behind them, the most prominent examples, and the practical challenges of applying them.

At a Glance

- Subject: Comprehensive Frameworks for Auditing AI Systems

- Category: Artificial Intelligence, Technology, Governance

The Rise of AI Auditing Frameworks

As artificial intelligence systems have grown more complex and more widely deployed, the need for robust frameworks to audit and assess them has become pressing. AI development has outpaced regulatory oversight, leaving governments, organizations, and the public to grapple with the ethical, social, and technical implications of these systems.

In response to this challenge, a growing number of global initiatives and research efforts have emerged, each aiming to establish standardized approaches for auditing and evaluating the safety, fairness, and transparency of AI systems. These frameworks seek to provide a structured methodology for scrutinizing the inner workings of AI algorithms, their data inputs, and the potential societal impact of their decisions and outputs.

The Principles of Comprehensive AI Auditing

At the core of these comprehensive frameworks for auditing AI systems are several fundamental principles that aim to ensure the responsible and accountable use of AI technology:

- Transparency: AI systems should be designed and operated in a manner that allows for clear visibility into their inner workings, decision-making processes, and potential biases.

- Accountability: Individuals and organizations deploying AI systems must be held responsible for the impacts, both intended and unintended, that these systems have on individuals and society.

- Fairness and non-discrimination: AI systems should be designed and deployed in a way that promotes equity and prevents discrimination against individuals or groups based on protected characteristics such as race, gender, age, or disability.

- Robustness and security: AI systems must be resilient to manipulation, adversarial attacks, and other forms of malicious interference that could compromise their integrity or reliability.

- Respect for human rights: The development and use of AI systems should be aligned with fundamental human rights, including privacy, freedom of expression, and due process.
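Of these principles, fairness is one of the easiest to turn into a measurable check. The sketch below computes a disparate impact ratio, one common fairness metric among many, by comparing the rate of favorable decisions across groups. The group labels, the sample decisions, and the function names are hypothetical. The 0.8 threshold follows the "four-fifths" rule of thumb from US employment guidance and is used here only for illustration, not as a threshold any framework in this article prescribes.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of favorable outcomes for each group.

    `records` is an iterable of (group, outcome) pairs, where outcome is
    1 for a favorable decision and 0 otherwise.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += outcome
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Ratio of the lowest group selection rate to the highest."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


# Hypothetical audit sample: group A is approved 6 times out of 10,
# group B is approved 3 times out of 10.
decisions = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 3 + [("B", 0)] * 7

ratio = disparate_impact_ratio(decisions)
flagged = ratio < 0.8  # illustrative threshold, see the note above
print(f"disparate impact ratio: {ratio:.2f}, flagged: {flagged}")
```

A single ratio like this is a screening signal rather than a verdict. An audit would normally look at several metrics and at the context in which decisions are made.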

Prominent Comprehensive AI Auditing Frameworks

Several organizations have developed frameworks for auditing AI systems, each with its own approach and emphasis. Two widely cited examples are described below.

The NIST AI Risk Management Framework

The National Institute of Standards and Technology (NIST) in the United States published the AI Risk Management Framework (AI RMF 1.0) in January 2023. It is a voluntary framework that provides a structured process for identifying, assessing, and mitigating the risks associated with AI systems, organized around four core functions: Govern, Map, Measure, and Manage. The framework emphasizes stakeholder engagement, clearly defined roles and responsibilities, and the integration of risk management practices throughout the AI system lifecycle.
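One way to picture the "identify, assess, mitigate" cycle is a small risk register. The sketch below is a generic illustration, not something the NIST framework prescribes: the `Risk` fields, the 1-to-5 likelihood and impact scales, the multiplicative score, and the threshold of 12 are all assumptions borrowed from conventional risk-matrix practice, and the entries are invented.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    lifecycle_stage: str  # e.g. "design", "training", "deployment"
    owner: str            # role accountable for the risk
    likelihood: int       # 1 (rare) to 5 (almost certain), assumed scale
    impact: int           # 1 (negligible) to 5 (severe), assumed scale
    mitigation: str = ""  # empty string means no mitigation recorded yet

    @property
    def score(self) -> int:
        # Simple likelihood x impact product, a common risk-matrix convention.
        return self.likelihood * self.impact


def prioritize(risks):
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


def unmitigated(risks, threshold=12):
    """Return risks at or above the threshold with no mitigation recorded."""
    return [r for r in risks if r.score >= threshold and not r.mitigation]


# Hypothetical register entries.
register = [
    Risk("Model outputs drift after deployment", "deployment",
         "ML operations", likelihood=3, impact=3,
         mitigation="monthly drift report"),
    Risk("Training data under-represents older applicants", "training",
         "data science lead", likelihood=4, impact=4),
    Risk("Prompt injection exposes customer records", "deployment",
         "security team", likelihood=2, impact=5),
]

ranked = prioritize(register)
open_items = unmitigated(register)
for risk in ranked:
    print(risk.score, risk.lifecycle_stage, risk.owner, risk.description)
```

Recording an owner and a lifecycle stage for every entry mirrors the framework's emphasis on defined responsibilities and on managing risk across the whole lifecycle rather than only at launch.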

The IEEE Ethically Aligned Design Guidelines

The Institute of Electrical and Electronics Engineers (IEEE), a global professional organization, published Ethically Aligned Design through its Global Initiative on Ethics of Autonomous and Intelligent Systems, with a first edition released in 2019. The document sets out principles and recommendations for designing, developing, and deploying AI systems that respect and promote human values and well-being. It covers a wide range of ethical considerations, from privacy and data rights to algorithmic bias and the impact on employment.

"The development of robust, transparent, and accountable AI systems is critical to ensuring that the benefits of this transformative technology are realized while mitigating the risks and potential harms." - Dr. Yoshua Bengio, renowned AI researcher and co-founder of the Montreal Institute for Learning Algorithms.

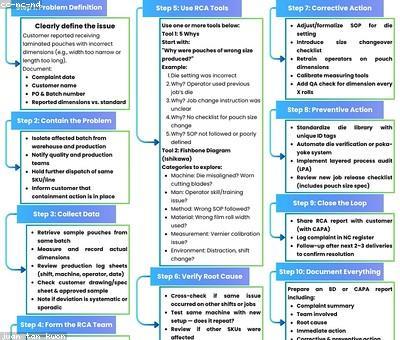

Auditing in Practice: Challenges and Considerations

Implementing comprehensive frameworks for auditing AI systems in practice presents a number of challenges and considerations that organizations must navigate:

Data Availability and Transparency

Thorough audits of AI systems often require access to extensive material: training data, model architectures, and detailed logs of system outputs and decisions. However, many AI-powered products and services are proprietary, and organizations may be reluctant to share this information, citing commercial sensitivity or intellectual property concerns.
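The detailed logging mentioned above can be designed with later audits in mind. The following is a minimal sketch, under stated assumptions, of a decision log in which each record stores a SHA-256 digest of the model input rather than the raw input, and links to the previous record by hash so that later edits are detectable. The field names, the model version string, and the sample features are hypothetical, and a production system would also need access control, retention rules, and secure storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(payload: dict) -> str:
    """SHA-256 digest of a dict serialized in a stable key order."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_audit_record(model_version, features, decision, prev_hash=""):
    """Build one log record chained to the previous record's hash."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": fingerprint(features),  # digest, not the raw input
        "decision": decision,
        "prev_hash": prev_hash,
    }
    # The record hash covers every field above, including prev_hash.
    record["record_hash"] = fingerprint(record)
    return record


def verify_chain(records):
    """Return True if no record was altered, removed, or reordered."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "record_hash"}
        if rec["prev_hash"] != prev or fingerprint(body) != rec["record_hash"]:
            return False
        prev = rec["record_hash"]
    return True


# Hypothetical usage with two logged decisions.
r1 = make_audit_record("credit-model-1.2", {"income": 52000, "age": 41}, "approve")
r2 = make_audit_record("credit-model-1.2", {"income": 18000, "age": 23}, "deny",
                       prev_hash=r1["record_hash"])
log = [r1, r2]
print(verify_chain(log))
```

Storing digests instead of raw inputs is one possible compromise between auditability and confidentiality: an auditor who is later given an input can confirm it matches the logged decision, while the log itself does not reveal the underlying data.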

Expertise and Interdisciplinary Collaboration

Comprehensive AI auditing requires a diverse range of expertise, including machine learning, software engineering, data science, ethics, and domain-specific knowledge. Building interdisciplinary teams capable of conducting rigorous, holistic audits can be a significant challenge for many organizations, particularly smaller entities or those new to the field of AI governance.

The Future of Comprehensive AI Auditing

As AI systems grow in prominence and impact, robust frameworks for auditing them will become more critical. In the years ahead, the methods used to assess the safety, fairness, and transparency of AI systems are likely to be refined further, driven by ongoing research, regulatory initiatives, and collaboration between industry, academia, and civil society.

One likely area of focus is the integration of AI auditing frameworks into broader enterprise risk management and governance, so that assessing and mitigating AI-related risks becomes part of routine organizational decision-making. In addition, specialized AI auditing tools and platforms, together with professional certifications and standards, could streamline the auditing process and make it accessible to a wider range of stakeholders.
