Using TensorBoard for Model Interpretability

Most people know almost nothing about using TensorBoard for model interpretability. That's about to change.

At a Glance

- Subject: Using TensorBoard for Model Interpretability

- Category: Machine Learning

Why TensorBoard Is a Game-Changer for Model Interpretability

When you're training complex machine learning models, understanding what's happening under the hood is critical, and for years it was hard to do. Print statements and ad hoc plots gave only a narrow view of what a model was doing during training. TensorBoard, the open-source visualization toolkit that Google develops alongside TensorFlow, fills that gap: it turns logged training data into interactive dashboards you can open in a browser.

How TensorBoard Works Its Magic

The key to TensorBoard's power is that it reads summary data your training code writes to disk. You instrument the code to log values such as scalars, histograms of tensor values, images, and the model graph to event files in a log directory. TensorBoard then renders those files as interactive visualizations of what the model is doing during training.
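As a rough illustration of that logging step, here is a minimal sketch using PyTorch's `torch.utils.tensorboard.SummaryWriter`. The article does not name a framework, so PyTorch is an assumption here, and `fake_layer_weights`, `write_demo_run`, and the `layer1/weights` tag are hypothetical names, with synthetic numbers standing in for a real model's weights.

```python
import random
from typing import List


def fake_layer_weights(step: int, size: int = 256, seed: int = 0) -> List[float]:
    """Stand-in for a layer's weights: the spread narrows as `step` grows."""
    rng = random.Random(seed + step)
    spread = 1.0 / (1.0 + 0.1 * step)
    return [rng.gauss(0.0, spread) for _ in range(size)]


def write_demo_run(log_dir: str, num_steps: int = 20) -> None:
    """Write one weight histogram per step to an event file under `log_dir`."""
    # Imported lazily so the helper above can be used without PyTorch installed.
    import torch
    from torch.utils.tensorboard import SummaryWriter

    writer = SummaryWriter(log_dir=log_dir)
    for step in range(num_steps):
        weights = torch.tensor(fake_layer_weights(step))
        # Each call adds one histogram slice at this training step.
        writer.add_histogram("layer1/weights", weights, global_step=step)
    writer.close()  # flush pending events to disk
```

Calling `write_demo_run("runs/demo")` and then starting `tensorboard --logdir runs` should make the logged histograms available under the Histograms and Distributions tabs.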

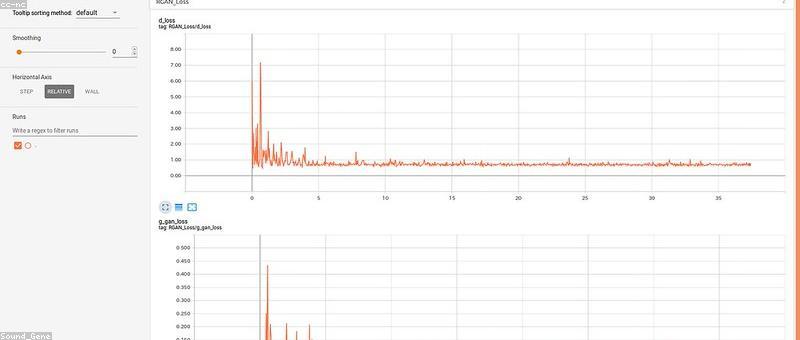

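One of the most valuable TensorBoard features is the Scalars dashboard, which plots any scalar you log against the training step: loss, accuracy, learning rate, or a gradient norm. (Full gradient and weight distributions are not scalars; they belong on the Histograms and Distributions dashboards.) This lets you quickly spot trends such as divergence or plateaus, identify problematic training iterations, and compare runs as you tune hyperparameters.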
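To make the scalar logging concrete, the sketch below records loss, accuracy, and a gradient norm for one training step. It assumes a writer object with an `add_scalar(tag, value, global_step)` method, which is what PyTorch's `SummaryWriter` provides; the function names and the `train/...` tags are illustrative choices, not anything prescribed by TensorBoard.

```python
import math
from typing import Iterable, Sequence


def global_grad_norm(grads: Iterable[Sequence[float]]) -> float:
    """L2 norm over all gradient values, flattened across parameters."""
    return math.sqrt(sum(g * g for grad in grads for g in grad))


def log_training_step(writer, step: int, loss: float, accuracy: float,
                      grads: Iterable[Sequence[float]]) -> None:
    """Log one point per metric; each tag becomes a chart on the Scalars dashboard.

    `writer` is assumed to expose add_scalar(tag, value, global_step), as
    torch.utils.tensorboard.SummaryWriter does.
    """
    writer.add_scalar("train/loss", loss, global_step=step)
    writer.add_scalar("train/accuracy", accuracy, global_step=step)
    # A gradient tensor is not a scalar, but its norm is, so it can be charted.
    writer.add_scalar("train/grad_norm", global_grad_norm(grads), global_step=step)
```

Passing the writer in, rather than creating it inside the function, keeps the logging code easy to exercise with a stub and leaves the choice of log directory to the caller.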

"Tensorboard gave me superpowers. Before, I was essentially training my models blindfolded. Now I can see exactly what's happening under the hood and make informed decisions to improve their performance." - Dr. Amelia Zhao, Lead ML Researcher at Acme AI

Visualizing Model Internals with TensorBoard

But the real value of TensorBoard for interpretability lies in visualizing the model's internal structure and learned representations. The Graphs dashboard provides an interactive view of your model's computational graph: you can expand and collapse grouped nodes and trace the flow of tensors through each layer and operation.

Even more powerful is the Embeddings dashboard (labeled Projector in the TensorBoard UI), which lets you project high-dimensional feature representations into 2D or 3D using methods such as PCA, t-SNE, or UMAP. This lets you literally see how your model is learning to encode the input data, surfacing clusters and relationships that would be nearly impossible to spot in the raw numbers alone.
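A minimal sketch of getting vectors into that dashboard, again assuming PyTorch's `SummaryWriter` and its `add_embedding` method. The helper names, the `penultimate_layer` tag, and the synthetic Gaussian features are all hypothetical; in practice the features would be activations taken from a layer of your own model.

```python
import random
from typing import List, Sequence, Tuple


def make_clustered_features(
    num_per_class: int, dim: int, class_names: Sequence[str], seed: int = 0
) -> Tuple[List[List[float]], List[str]]:
    """Synthetic stand-in for per-example feature vectors, one cluster per class."""
    rng = random.Random(seed)
    features, labels = [], []
    for index, name in enumerate(class_names):
        for _ in range(num_per_class):
            # Each class is centered at a different offset along every axis.
            features.append([rng.gauss(3.0 * index, 1.0) for _ in range(dim)])
            labels.append(name)
    return features, labels


def log_embeddings(log_dir: str, features: List[List[float]],
                   labels: List[str]) -> None:
    """Write feature vectors plus one label per row for the embedding projector."""
    # Imported lazily so the data helper works without PyTorch installed.
    import torch
    from torch.utils.tensorboard import SummaryWriter

    writer = SummaryWriter(log_dir=log_dir)
    # `metadata` supplies the per-point labels used for coloring and search.
    writer.add_embedding(torch.tensor(features), metadata=labels,
                         tag="penultimate_layer")
    writer.close()
```

After `log_embeddings("runs/embeddings", *make_clustered_features(50, 16, ["cat", "dog", "truck"]))`, the projector should show three separated groups of points that can be colored by label. With real activations, classes whose points overlap are the ones worth a closer look.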

TensorBoard in Action: Real-World Examples

So how are AI researchers and engineers actually using TensorBoard in practice? Let's look at two case studies:

Debugging a Faulty Image Classifier

When the team at Acme AI noticed their image classification model was struggling with certain types of images, they turned to TensorBoard's Embeddings dashboard. By visualizing the model's learned feature representations, they quickly identified a blind spot: the model was failing to properly distinguish visually similar classes. Armed with this insight, they were able to retrain the model with more targeted data, improving its robustness.

Accelerating NLP Model Development

At NLP Innovations, the research scientists use TensorBoard to continuously monitor their language models during training. The Scalars dashboard helps them spot training bottlenecks early, while the Graphs view lets them inspect each model's architecture. Combined with TensorBoard's collaborative features, this has cut their model iteration cycles by over 30%.

The Future of TensorBoard

As machine learning models continue to grow in complexity, the need for interpretability tools like TensorBoard will only increase. Beyond the core dashboards, TensorBoard already includes the Projector for visualizing high-dimensional data and a Debugger for tracing a TensorFlow model's execution and catching numerical problems such as NaNs, and its plugin system lets new dashboards be added over time.

The bottom line is clear: if you're working with sophisticated machine learning models, TensorBoard is an essential tool in your arsenal. By giving you visibility into model internals, it helps you troubleshoot issues, optimize performance, and better understand what your models have learned.
