The Neuroscience Of Moral Decision Making

The deeper you look into the neuroscience of moral decision making, the stranger and more fascinating it becomes.

At a Glance

- Subject: The Neuroscience Of Moral Decision Making

- Category: Neuroscience, Psychology, Ethics

The Trolley Problem and the Dual-Process Theory

At the heart of the neuroscience of moral decision making lies the famous "trolley problem." This classic thought experiment presents a scenario in which a runaway trolley is headed towards five people, who will be killed unless you divert it onto another track, where it will kill one person instead. Most people say they would divert the trolley, sacrificing one life to save five. But ask them whether they would push a large man off a footbridge to stop the trolley, and most recoil at the idea of directly causing someone's death, even though the outcome is the same: one person dies and five are saved.

Researchers believe the contrast between these two cases reflects a deeper divide in the brain's decision-making processes. The "impersonal" switch version of the dilemma seems to engage more of our analytical, utilitarian reasoning, while the "personal" footbridge version triggers a stronger emotional response and an aversion to direct harm. This "dual-process theory" of moral judgment holds that we rely on both fast, automatic emotional intuitions and slower, more controlled cognitive deliberation when facing tough moral choices.

The Trolley Dilemma in the Real World

While the trolley problem may seem like a far-fetched theoretical exercise, it has surprising real-world relevance. As autonomous vehicles become more common, their software may face situations in which it must decide in a split second whom or what to prioritize in an unavoidable accident. Should a self-driving car swerve to avoid a jaywalker if doing so puts its own passenger at risk? Philosophers and policymakers are grappling with these thorny ethical quandaries.

Even outside of technology, the trolley dilemma crops up in other high-stakes contexts. Medical triage situations, military operations, disaster response — all can require difficult choices about who to save and who to sacrifice. And research suggests that people's responses to these dilemmas are heavily influenced by factors like their emotional state, cultural background, and even the wording of the question.

"We're not just cold, calculating machines. Moral decision-making involves a complex interplay of cognition and emotion that neuroscience is only beginning to unravel."

The Role of Empathy

Empathy, the ability to understand and share the feelings of others, also plays a crucial role in moral decision-making. Studies have found that people who score higher on measures of empathy, particularly empathic concern for others, are less likely to make utilitarian choices in personal moral dilemmas: their emotional aversion to causing direct harm tends to outweigh the appeal of the greater good.

The flip side is that psychopaths and people with certain forms of brain damage that impair empathy are more likely to make coldly utilitarian decisions. Their lack of emotional resonance with the victims makes it easier for them to choose the option that maximizes the number of lives saved.

The Neurobiology of Moral Judgment

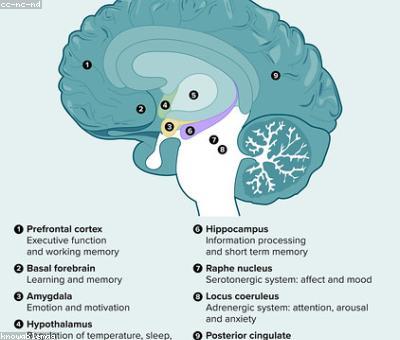

Advances in neuroimaging and lesion studies have shed light on the specific brain regions involved in moral decision-making. The ventromedial prefrontal cortex, anterior cingulate cortex, and amygdala seem to play key roles in integrating emotional responses, social cognition, and moral reasoning.

For example, patients with damage to the ventromedial prefrontal cortex often show altered moral judgment, with a reduced aversion to causing direct personal harm. Heightened activity in the amygdala, by contrast, is associated with stronger emotional responses to morally salient scenarios.

Importantly, these brain regions don't work in isolation. Moral judgments emerge from the dynamic interplay of multiple neural systems, shaped by our individual differences in personality, life experiences, and cultural values.

The Neuroscience of Moral Development

Morality is not static – it evolves over the course of our lives. Neuroscientific research has shed light on the neural underpinnings of this moral development. During childhood and adolescence, the prefrontal cortex and other brain regions involved in impulse control, decision-making, and social cognition undergo significant structural and functional changes.

As we grow older, our moral judgments become more sophisticated, moving from a focus on obedience and self-interest towards more abstract principles of justice, fairness, and harm prevention. Developmental studies suggest that this maturation of moral reasoning is reflected in shifting patterns of brain activity, with increased recruitment of cognitive control regions and decreased reliance on emotional impulses.

The Future of Moral Neuroscience

As our understanding of the neuroscience of moral decision-making continues to grow, it raises a host of intriguing philosophical and ethical questions. Could we one day use brain scans to predict how people will respond to moral quandaries? Should we adjust our legal and social institutions to account for the emotional and cognitive biases underlying moral judgments? And what are the implications for moral responsibility and free will?

While the answers to these questions remain elusive, the field of moral neuroscience is poised to play a vital role in shaping our collective understanding of what it means to be a moral being. By illuminating the complex interplay of reason and emotion in our ethical decision-making, it offers a window into the very heart of human nature.
