The Explainability Imperative: Making AI Decisions Transparent and Accountable

Making AI decisions transparent and accountable sounds simple, but it quickly raises hard questions: what counts as an explanation, who it is for, and how anyone can check it.

At a Glance

- Subject: The Explainability Imperative: Making AI Decisions Transparent and Accountable

- Category: Artificial Intelligence, Machine Learning, Ethics, Transparency

The Rise of the Black Box

As artificial intelligence and machine learning systems have become more sophisticated and ubiquitous, they have also become more opaque. Many of today's most powerful AI models are "black boxes": complex neural networks whose inner workings and decision-making processes are hidden from human view. This opacity is a serious obstacle to deploying AI ethically, because without insight into how a decision was reached we cannot verify that it was fair or hold anyone accountable for it.

The Call for Explainable AI

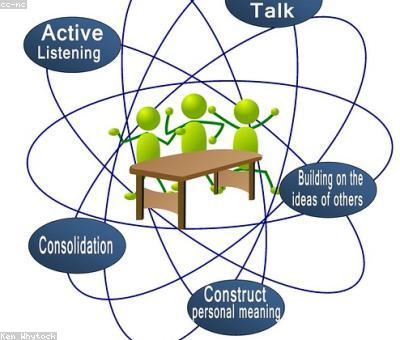

This lack of transparency has sparked a growing movement for "explainable AI" (XAI) – AI systems whose decision-making can be clearly understood and audited by human operators. Proponents of XAI argue that as AI becomes increasingly integrated into high-stakes domains like healthcare, finance, and criminal justice, it is essential that these systems be accountable and their decisions justifiable.

The push for explainable AI has gained momentum in recent years, with researchers, policymakers, and ethicists all weighing in on the importance of this issue. Many are calling for new regulatory frameworks that would mandate transparency and interpretability for AI systems, particularly in areas that impact people's lives and livelihoods.

Techniques for Explainable AI

Several technical approaches can make AI systems more explainable. These include:

- Interpretable Model Design: Creating AI models whose inner workings are more easily understood, such as decision trees or linear regression models, rather than complex neural networks.

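- Post-Hoc Explanation: Applying a separate "explainer" to a black-box AI system after training to account for its outputs, for example by estimating how much each input feature contributed to a prediction (the approach taken by methods such as LIME and SHAP).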

- Proactive Transparency: Designing AI systems to automatically generate explanations for their decisions, either in natural language or through visualizations.
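As a rough illustration of the post-hoc approach listed above, the sketch below applies permutation importance, one common model-agnostic technique, to a stand-in black box. Everything here is hypothetical and chosen only for the example: the `black_box` scoring rule, the feature names, and the synthetic data. The idea is to shuffle one input feature at a time and measure how much the model's accuracy drops; a feature the model ignores should show no drop at all.

```python
import random

# Hypothetical feature names, used only for this illustration.
FEATURE_NAMES = ["income", "debt", "unused"]


def black_box(row):
    """Stand-in for an opaque model. The explainer below only calls it,
    it never looks inside. The scoring rule is an assumption for the demo."""
    income, debt, unused = row
    return 1 if 0.7 * income - 1.2 * debt > 10 else 0


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    hits = sum(1 for row, label in zip(rows, labels) if model(row) == label)
    return hits / len(rows)


def permutation_importance(model, rows, labels, n_features, rng):
    """For each feature, shuffle its column and report the accuracy drop."""
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [row[j] for row in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled_rows = [
            row[:j] + (value,) + row[j + 1:]
            for row, value in zip(rows, column)
        ]
        importances.append(baseline - accuracy(model, shuffled_rows, labels))
    return importances


# Synthetic data with a fixed seed; labels are the model's own decisions,
# so the baseline accuracy is 1.0 by construction.
rng = random.Random(0)
rows = [
    (rng.uniform(0, 100), rng.uniform(0, 50), rng.uniform(0, 1))
    for _ in range(200)
]
labels = [black_box(row) for row in rows]

importances = permutation_importance(black_box, rows, labels, len(FEATURE_NAMES), rng)
for name, score in zip(FEATURE_NAMES, importances):
    print(f"{name}: accuracy drop {score:.3f}")
```

Because the explainer treats the model purely as a callable, the same function would work on any classifier. The trade-off is that it describes the model's overall behavior rather than justifying any single decision.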

Ethical Implications of XAI

The rise of explainable AI also raises important ethical questions. How much transparency is enough? What constitutes a "good" explanation, and who gets to define that? There are also concerns about the potential for explainability techniques to be misused, with bad actors gaming the system or manipulating the explanations.

"Explainable AI is not just a technical challenge – it's a moral imperative. As AI becomes more powerful and pervasive, we have an ethical obligation to ensure that these systems are operating with transparency and accountability." - Dr. Emily Bender, Professor of Computational Linguistics, University of Washington

The Path Forward

Despite these complexities, the push for explainable AI is necessary. As AI shapes more of our lives, we need tools and frameworks that ensure these systems make decisions in a fair, ethical, and accountable manner.

By prioritizing explainability, we can unlock the full potential of AI while maintaining human control and oversight. This will require collaboration between technologists, policymakers, ethicists, and the public – but the stakes are too high to settle for anything less than true transparency and accountability.
