Perceptron

Everything you never knew about the perceptron, from its origins in mid-century brain research to the ways it shapes machine learning today.

At a Glance

- Subject: Perceptron

- Category: Computer Science, Artificial Intelligence

The Forgotten Origins of the Perceptron

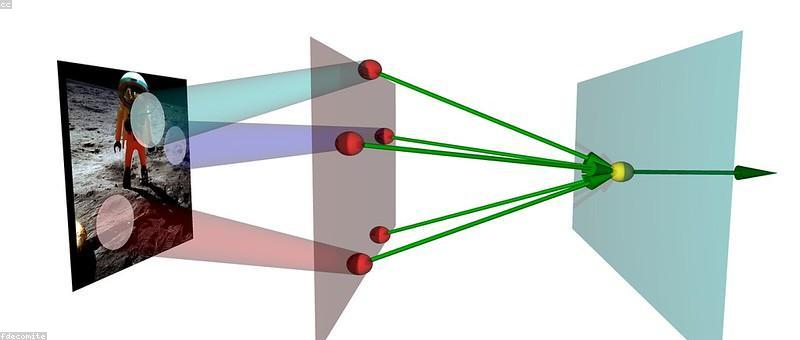

While the perceptron is now a foundational idea in machine learning, its roots go back to 1943, when Warren McCulloch and Walter Pitts proposed a mathematical model of the neuron. In the late 1950s the American psychologist Frank Rosenblatt, working at the Cornell Aeronautical Laboratory, built on that idea. Rosenblatt was fascinated by the human brain's ability to recognize patterns and make decisions. He hypothesized that by mimicking the structure and function of neurons, he could create a machine capable of "learning" in a similar way.

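In 1957, Rosenblatt described his creation: the perceptron, a simple neural network algorithm that computes a weighted sum of its inputs, compares it to a threshold, and assigns the input to one of two categories. Training adjusts the weights whenever the machine makes a mistake. The early results were promising for their time. In a 1958 demonstration on an IBM 704 computer, the perceptron learned after a few dozen trials to tell punch cards marked on the left from cards marked on the right, and the later Mark I Perceptron hardware was built to recognize simple shapes from a 20×20 grid of photocells.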
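To make the idea of "training" concrete, here is a minimal sketch of the perceptron learning rule in Python. It is an illustration under stated assumptions, not Rosenblatt's original implementation: the function names, the learning rate, and the toy dataset (the logical AND of two inputs) are all chosen here for demonstration.

```python
def predict(weights, bias, x):
    """Threshold unit: output 1 if the weighted sum exceeds 0, else 0."""
    total = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron learning rule: adjust weights only on misclassified samples."""
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:  # a full pass with no errors: training is done
            break
    return weights, bias


# Toy data (an assumption for illustration): the logical AND of two inputs.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
predictions = [predict(weights, bias, x) for x, _ in AND_SAMPLES]
print(predictions)  # [0, 0, 0, 1]
```

Because AND is linearly separable, the rule settles on a correct set of weights after a handful of passes over the four examples.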

The Perceptron's Dark Years

Despite its early promise, the perceptron soon fell out of favor in the field of AI. In 1969, the book Perceptrons by Marvin Minsky and Seymour Papert laid out fundamental limitations of the single-layer perceptron: it can only learn patterns that are linearly separable, so it cannot represent functions such as the "XOR" logic gate. The book is widely credited with contributing to a sharp decline in funding and research into neural networks during the 1970s, a period often grouped with the broader "AI winter".
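The XOR problem can be seen with a little arithmetic. Suppose a single threshold unit outputs 1 when w1*x1 + w2*x2 + b > 0 (the symbols w1, w2, and b are names assumed here for the two weights and the bias). XOR would require b <= 0, w1 + b > 0, w2 + b > 0, and w1 + w2 + b <= 0. Adding the two middle inequalities gives w1 + w2 + 2b > 0, so w1 + w2 + b > -b >= 0, which contradicts the last requirement. The sketch below illustrates the same point by brute force. It searches a small grid of candidate weights, which is an illustration rather than a proof, and the grid range is an arbitrary choice.

```python
from itertools import product


def fits(w1, w2, b, samples):
    """True if a single threshold unit with these weights matches every sample."""
    return all(
        (1 if w1 * x1 + w2 * x2 + b > 0 else 0) == target
        for (x1, x2), target in samples
    )


def separable_on_grid(samples, grid):
    """Try every (w1, w2, b) combination drawn from the grid."""
    return any(fits(w1, w2, b, samples) for w1, w2, b in product(grid, repeat=3))


# Candidate values from -4.0 to 4.0 in steps of 0.5 (an arbitrary choice).
GRID = [i / 2 for i in range(-8, 9)]

AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_found = separable_on_grid(AND_SAMPLES, GRID)
xor_found = separable_on_grid(XOR_SAMPLES, GRID)
print(and_found)  # True
print(xor_found)  # False
```

The search finds weights for AND (for example w1 = 1, w2 = 1, b = -1.5) but none for XOR, in line with the inequality argument above.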

For years, the perceptron was dismissed as a flawed and outdated technology, overshadowed by the rise of rule-based expert systems and symbolic AI. It seemed that Rosenblatt's creation was destined to be relegated to the annals of history.

"The perceptron, by itself, cannot be taught to perform equivalence problems, such as the exclusive-or function." - Marvin Minsky and Seymour Papert, 1969

The Perceptron's Triumphant Return

Yet the perceptron's story was far from over. In the 1980s and 90s, a new generation of researchers began to revisit and expand upon Rosenblatt's work, helped by advances in computing power and, later, the availability of large datasets. Multilayer perceptrons trained with the backpropagation algorithm, popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams, overcame the limitations identified by Minsky and Papert: adding a hidden layer lets a network represent functions such as XOR that no single-layer perceptron can.
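A small sketch shows why an extra layer helps. The network below has two hidden threshold units and one output unit, and it computes XOR as "OR but not AND". The weights are hand-picked here for illustration rather than learned; in practice backpropagation would find suitable weights from data, usually with smooth activations in place of the hard threshold.

```python
def step(z):
    """Hard threshold activation: 1 if z is positive, else 0."""
    return 1 if z > 0 else 0


def xor_mlp(x1, x2):
    """Two-layer perceptron with hand-set weights that computes XOR."""
    h_or = step(x1 + x2 - 0.5)        # hidden unit 1: fires if at least one input is 1
    h_and = step(x1 + x2 - 1.5)       # hidden unit 2: fires only if both inputs are 1
    return step(h_or - h_and - 0.5)   # output: OR is on and AND is off


outputs = [xor_mlp(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(outputs)  # [0, 1, 1, 0]
```

Each hidden unit draws one straight line through the input space, and the output unit combines the two regions, which is something a single line cannot do.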

Today, perceptron-like units, each computing a weighted sum of its inputs followed by a nonlinear activation, are the building blocks of many of the most powerful machine learning models, from deep learning systems that can caption images to natural language processing models that can engage in human-like dialogue. Their ability to "learn" from data has made neural networks an indispensable tool in fields ranging from computer vision and speech recognition to medical diagnosis and financial forecasting.

The Future of the Perceptron

As artificial intelligence continues to advance at a breakneck pace, the humble perceptron remains a fundamental building block of the field. Researchers keep extending the basic unit, from adding memory and feedback connections, as in recurrent networks, to combining neural networks with other machine learning techniques.

Moreover, the principles that underpin the perceptron – the notion of a network of interconnected nodes that can learn to recognize patterns – have inspired a new generation of neuromorphic computing architectures, which aim to mimic the brain's neural structure and information processing.

In many ways, the story of the perceptron mirrors the broader arc of artificial intelligence itself – a tale of triumph, setback, and ultimate redemption. But as the technology continues to evolve, one thing is clear: the perceptron's influence will only grow, shaping the way we understand and interact with the world around us.
