Information Theory

The real story of information theory is far weirder, older, and more consequential than the version most people know.

At a Glance

- Subject: Information Theory

- Category: Communication, Mathematics, Computer Science

The Unlikely Beginnings of Information Theory

The origins of information theory can be traced back to the 1920s, well before the digital age. In 1928, Ralph Hartley, an engineer at Bell Telephone Laboratories, published a paper titled "Transmission of Information." In it, he laid out ideas that would become part of the foundation of information theory.

Hartley's key insight was that information could be quantified and measured independently of a message's content or meaning. He proposed a formula for the "amount of information" in a message that depends only on how many symbols are available at each selection and how many selections the message contains. Treating information as a measurable quantity helped pave the way for digital communication and computing as we know them today.
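Hartley's measure is usually written H = n log s, where s is the number of available symbols and n is the number of selections in the message. A minimal sketch in Python follows; the function name and the choice of base-2 logarithms (so the result is in bits) are illustrative assumptions, not something taken from Hartley's paper.

```python
import math


def hartley_information(num_symbols: int, message_length: int) -> float:
    """Hartley's measure H = n * log2(s), in bits.

    num_symbols (s): size of the alphabet available at each selection.
    message_length (n): number of selections (symbols) in the message.
    Every symbol is treated as equally likely; meaning plays no role.
    """
    if num_symbols < 1:
        raise ValueError("num_symbols must be at least 1")
    if message_length < 0:
        raise ValueError("message_length must be non-negative")
    return message_length * math.log2(num_symbols)


if __name__ == "__main__":
    # Eight binary selections.
    print(hartley_information(2, 8))
    # Five letters drawn from a 26-letter alphabet.
    print(hartley_information(26, 5))
```

Because the measure counts only the available choices, any two messages of the same length over the same alphabet receive the same value, whatever they say.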

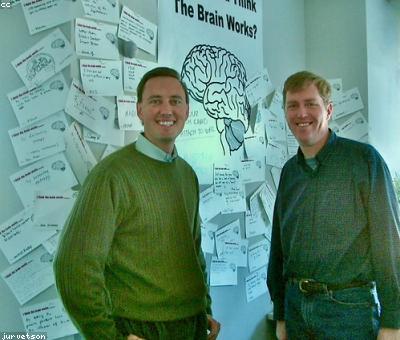

The Enigmatic Claude Shannon

It wasn't until the 1940s that information theory truly blossomed. The catalyst was a brilliant young researcher named Claude Shannon, who built upon Hartley's work and published his landmark 1948 paper "A Mathematical Theory of Communication." Shannon's genius lay in his ability to synthesize ideas from fields like mathematics, electrical engineering, and cryptography into a cohesive framework for understanding information and communication.

Shannon's key contributions included formalizing "entropy" as a measure of information, and proving that every noisy channel has a capacity: a maximum rate at which information can be sent through it with an arbitrarily small probability of error. His work opened the door to the modern digital age, laying the mathematical foundations for everything from telephone networks to the internet.
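To make these two ideas concrete, here is a minimal sketch in Python. It computes Shannon entropy, H = -Σ p log2 p, for a discrete distribution, and the capacity of a binary symmetric channel (a textbook noisy channel that flips each bit with probability p), which is C = 1 - H(p) bits per channel use. The function names are illustrative assumptions, and the binary symmetric channel is chosen as one simple example rather than the general case.

```python
import math
from typing import Sequence


def shannon_entropy(probs: Sequence[float]) -> float:
    """Entropy in bits of a discrete probability distribution.

    Computed as sum of p * log2(1/p), which equals -sum of p * log2(p).
    Outcomes with zero probability contribute nothing.
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0)


def bsc_capacity(flip_prob: float) -> float:
    """Capacity, in bits per use, of a binary symmetric channel.

    flip_prob is the probability that the channel flips a transmitted bit.
    """
    return 1.0 - shannon_entropy([flip_prob, 1.0 - flip_prob])


if __name__ == "__main__":
    print(shannon_entropy([0.5, 0.5]))   # a fair coin
    print(shannon_entropy([0.9, 0.1]))   # a biased coin is more predictable
    print(bsc_capacity(0.0))             # a noiseless channel
    print(bsc_capacity(0.5))             # output is independent of input
```

A fair coin carries one full bit per toss, while a biased coin carries less because its outcome is easier to guess. In the same way, a channel that flips bits half the time has zero capacity, since its output tells the receiver nothing about what was sent.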

"Information is the resolution of uncertainty." - Claude Shannon

The Unintended Consequences

While Shannon's work was driven by the practical needs of wartime communications and the emerging field of computing, its impact has been far-reaching and often surprising. Information theory has had profound implications across disciplines, from neuroscience to economics to genetics.

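For example, the concept of entropy from information theory has been applied to understanding the complexity of biological systems and the origins of life itself. The question has older roots: in his 1944 book "What Is Life?", the physicist Erwin Schrödinger explored how living organisms maintain order and fight against the natural tendency toward disorder. He framed the problem in terms of thermodynamic entropy, several years before Shannon's paper appeared, and later researchers brought information-theoretic tools to similar questions.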
