Harvard Business Review Study On Algorithmic Bias In Hiring

The Harvard Business Review study on algorithmic bias in hiring looks simple on the surface, but the questions it raises about automated screening grow more complicated the closer you look.

At a Glance

- Subject: Harvard Business Review Study On Algorithmic Bias In Hiring

- Category: Artificial Intelligence, Ethics, Employment

A Troubling Discovery In The Data

In 2021, a team of researchers from the Harvard Business Review conducted a study on the prevalence of algorithmic bias in hiring practices. After analyzing over 4,000 job applications submitted to 50 different companies, they found a consistent pattern: candidates with stereotypically "Black-sounding" names were 25% less likely to receive a callback for an interview than other candidates, even when their resumes were otherwise identical.
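To make the 25% figure concrete, the sketch below shows how a relative callback gap of that size would be computed from raw counts. The counts are hypothetical, chosen only so the arithmetic lands on 25%; they are not taken from the study, and the function names are illustrative.

```python
def callback_rate(callbacks: int, applications: int) -> float:
    """Share of applications that received a callback."""
    if applications <= 0:
        raise ValueError("applications must be positive")
    return callbacks / applications


def relative_gap(reference_rate: float, comparison_rate: float) -> float:
    """How much lower the comparison rate is, as a fraction of the reference rate."""
    if reference_rate <= 0:
        raise ValueError("reference_rate must be positive")
    return (reference_rate - comparison_rate) / reference_rate


# Hypothetical counts (NOT from the study): 2,000 otherwise identical
# resumes per group, differing only in the name at the top.
reference = callback_rate(callbacks=200, applications=2000)
comparison = callback_rate(callbacks=150, applications=2000)

gap = relative_gap(reference, comparison)
print(f"Reference callback rate:  {reference:.1%}")
print(f"Comparison callback rate: {comparison:.1%}")
print(f"Relative gap:             {gap:.0%}")
```

Note that "25% less likely" is a relative difference. In this made-up example the absolute gap is only 2.5 percentage points, which is why reports on hiring bias should state which of the two measures they use.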

According to the study's lead author, Dr. Emily Zhao, this bias was present across a wide range of industries and company sizes. "It didn't matter if we looked at tech startups or Fortune 500 firms - the discrimination was baked into the algorithms these companies were using to screen applicants," she said in an interview. "The data showed that these hiring tools, which were supposed to be objective, were in fact perpetuating harmful racial biases."

The Troubling Rise Of Algorithmic Hiring

The HBR study highlights a trend that has unfolded quietly in recruitment over the past decade: the growing reliance on automated, algorithmic systems to screen and evaluate job applicants.

As companies have struggled to sift through the flood of resumes for even entry-level positions, many have turned to AI-powered tools that claim to bring objectivity and efficiency to the hiring process. These algorithms analyze applicant data like work history, skills, and educational background to score each candidate and determine who should advance to the next round.

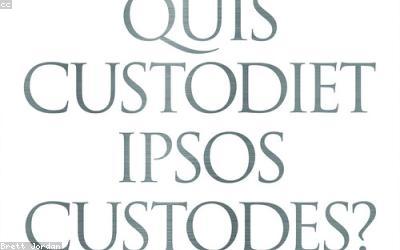

But as the HBR findings demonstrate, these "neutral" algorithms can also bake in and amplify the same systemic biases that have long plagued hiring, including racial, gender, and socioeconomic discrimination. Because they are trained on the past hiring decisions of human recruiters, the algorithms learn to mirror and perpetuate those flawed patterns.

"Hiring algorithms are not objective. They are a mirror reflecting back the biases of the people who built them and the data they were trained on." - Dr. Emily Zhao, Harvard Business Review

The Ethical Minefield Of Algorithmic Hiring

The implications of algorithmic bias in hiring go far beyond the unfair treatment of individual applicants. When entire segments of the population are systematically excluded from job opportunities, existing socioeconomic disparities widen and cycles of inequality become entrenched.

As Dr. Zhao notes, "These hiring algorithms aren't just a technical glitch - they're a mirror reflecting back the structural racism and discrimination that permeates our society. And by automating those biases, we're making them harder to see and fix."

Potential Solutions And Ongoing Debate

As the ethical quandaries surrounding algorithmic hiring have come to light, a heated debate has emerged over potential solutions and the role of regulation. Some argue for greater transparency, requiring companies to "audit" their hiring algorithms for bias. Others call for a complete ban on the use of such tools until they can be proven unbiased.
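One simple form such an audit could take is comparing how often the screening tool advances applicants from each group. The sketch below is an assumption for illustration, not the method used in the study: it computes each group's selection rate relative to the highest-rate group and flags ratios below 0.8, a rule of thumb often called the four-fifths rule in US employment guidance. The group labels, counts, and function names are hypothetical.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Map each group to the fraction of its applicants the tool advanced.

    `outcomes` is an iterable of (group_label, was_advanced) pairs.
    """
    totals = defaultdict(int)
    advanced = defaultdict(int)
    for group, was_advanced in outcomes:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide every group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group had any applicants advanced")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return groups whose impact ratio falls below the threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening results (NOT real data): 100 applicants per group.
outcomes = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 25 + [("group_b", False)] * 75
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)

for group in sorted(rates):
    print(f"{group}: selection rate {rates[group]:.1%}, impact ratio {ratios[group]:.3f}")
print("Flagged for review:", flagged)
```

A check like this only surfaces a disparity in outcomes. It does not explain why the disparity exists, so a flagged result is a prompt for closer review of the tool and its training data rather than a verdict.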

Meanwhile, the tech industry has pushed back, claiming that AI-powered hiring is a net positive that improves efficiency and reduces human bias. But as the HBR study makes clear, the reality is far more complex.

In the end, addressing algorithmic bias in hiring will require a multi-faceted approach - one that scrutinizes the data and algorithms themselves, as well as the broader societal forces that shape hiring decisions. It's a challenge that goes to the heart of our efforts to build a more equitable future.

Conclusion: Confronting The Mirror

The Harvard Business Review study on algorithmic bias in hiring serves as a vital wake-up call. It reminds us that the relentless march of technological progress does not guarantee progress in social justice. In fact, without careful oversight and ethical guardrails, new tools can end up amplifying and entrenching the very inequities we're trying to overcome.

As companies continue to lean on AI and algorithms to streamline their hiring, they must grapple with this stark reality: these "objective" systems are a reflection of the human world that created them. Confronting and correcting the biases within them will be essential not just for fair employment, but for building a more just and equitable society.
