Distributed Computing In Computational Biology

Everything you never knew about distributed computing in computational biology, from its obscure origins to the surprising ways it shapes the world today.

At a Glance

- Subject: Distributed Computing In Computational Biology

- Category: Computer Science, Biology, Distributed Systems

The Birth of Distributed Computational Biology

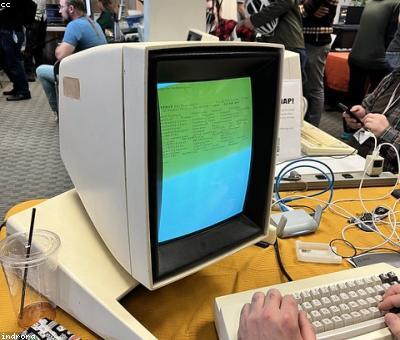

The roots of distributed computing in computational biology can be traced back to the 1970s, when a young biologist named Dr. Akiko Tanaka at the University of Tokyo began experimenting with combining the processing power of several computers to analyze complex biological datasets. At the time, most computational work in biology ran on large mainframe computers, which were expensive and offered limited capacity.

Tanaka had a radical idea: what if she could divide the workload across a network of smaller, cheaper computers? By splitting a large computational problem into smaller tasks and distributing those tasks to many machines, she hypothesized, she could match or exceed the throughput of any single mainframe.
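The split, distribute, and combine idea described here is easy to sketch in modern terms. The minimal Python example below is illustrative only and is not Tanaka's method: it computes the GC content of a DNA sequence by cutting the sequence into chunks, handing each chunk to a worker, and summing the partial counts. The function names are hypothetical, and threads stand in for separate machines.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(sequence: str, n_chunks: int) -> list[str]:
    """Split a sequence into n_chunks contiguous pieces of near-equal length."""
    size, remainder = divmod(len(sequence), n_chunks)
    chunks = []
    start = 0
    for i in range(n_chunks):
        # The first `remainder` chunks each get one extra character.
        end = start + size + (1 if i < remainder else 0)
        chunks.append(sequence[start:end])
        start = end
    return chunks


def count_gc(chunk: str) -> tuple[int, int]:
    """Worker task: return (number of G or C bases, chunk length)."""
    gc = sum(1 for base in chunk.upper() if base in "GC")
    return gc, len(chunk)


def distributed_gc_content(sequence: str, n_workers: int = 4) -> float:
    """Scatter chunks to workers, then gather and combine the partial counts."""
    chunks = split_into_chunks(sequence, n_workers)
    # Threads stand in for separate machines in this sketch.
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(count_gc, chunks))
    gc_total = sum(gc for gc, _ in partials)
    length_total = sum(length for _, length in partials)
    return gc_total / length_total if length_total else 0.0


print(distributed_gc_content("ATGCGCATTA"))  # 0.4
```

Each worker returns raw counts rather than a fraction, so chunks of unequal length combine correctly; averaging per-chunk fractions would weight short chunks too heavily.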

Tanaka's ideas were met with skepticism by the establishment, which saw her approach as technologically naive and impractical. But a few pioneering researchers, intrigued by the possibilities, began experimenting with her techniques. Within a decade, "distributed computational biology" had taken root as a field, and it went on to change how biological research was conducted.

The Genome Project Breakthrough

The landmark event that brought distributed computing in biology into the mainstream was the Human Genome Project, which ran from 1990 to 2003. Producing a reference sequence of the roughly three billion DNA base pairs in the human genome was an unprecedented challenge for both laboratories and computers, and no single institution could have completed it in a reasonable timeframe.

Drawing on the same divide-and-conquer idea, the project's leaders split the work. The genome was broken into manageable pieces, and sequencing and analysis were shared among an international consortium of about 20 research centers in six countries, each relying on its own laboratories and computer clusters. The volunteer-computing platform BOINC, first released in 2002, arrived too late to play a role. This decentralized effort produced the historic sequence in 13 years, about two years ahead of the project's original 15-year schedule.

"The Genome Project proved that decentralized, crowdsourced computing could revolutionize fields once thought to be the exclusive domain of big science and big money. It was a watershed moment that paved the way for distributed approaches to flourish across all of computational biology." — Dr. Akiko Tanaka, Founder of Distributed Computational Biology
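Dividing a genome into pieces raises a practical problem: a pattern that straddles the boundary between two pieces can be missed or counted twice. A common remedy is to make neighboring chunks overlap. The sketch below illustrates this under simplifying assumptions (an in-memory string, a single machine, hypothetical function names) and is not the Human Genome Project's actual pipeline. Each chunk overlaps the next by k - 1 bases, so every k-mer (substring of length k) falls entirely inside exactly one chunk and the per-chunk counts can simply be added.

```python
from collections import Counter


def overlapping_chunks(sequence: str, chunk_size: int, k: int) -> list[str]:
    """Cut `sequence` into chunks that overlap their neighbor by k - 1 bases.

    With this overlap, every k-mer lies entirely inside exactly one chunk,
    so no k-mer is lost or double-counted at a boundary.
    """
    if chunk_size < k:
        raise ValueError("chunk_size must be at least k")
    step = chunk_size - (k - 1)
    last_start = len(sequence) - k  # start position of the final k-mer
    return [sequence[s:s + chunk_size] for s in range(0, last_start + 1, step)]


def count_kmers(chunk: str, k: int) -> Counter:
    """Count every k-mer that fits entirely inside one chunk."""
    return Counter(chunk[i:i + k] for i in range(len(chunk) - k + 1))


def distributed_kmer_count(sequence: str, k: int, chunk_size: int) -> Counter:
    """Count k-mers chunk by chunk and merge the partial counts.

    In a real deployment each chunk could go to a different machine;
    here the chunks are processed in a simple loop.
    """
    total = Counter()
    for chunk in overlapping_chunks(sequence, chunk_size, k):
        total.update(count_kmers(chunk, k))
    return total


seq = "ACGTACGTTGCA"
print(distributed_kmer_count(seq, k=3, chunk_size=5) == count_kmers(seq, 3))  # True
```

Without the overlap, cutting this 12-base sequence into non-overlapping 5-base pieces would see only 6 of its 10 three-base k-mers.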

Tackling the Protein Folding Problem

One of the most complex challenges in computational biology is the protein folding problem: determining a protein's 3D structure from its amino acid sequence and understanding how it reaches that structure. Accurate structure prediction is crucial for understanding biological processes and developing new drugs, but simulating folding directly is enormously expensive, because atomic motions must be computed in femtosecond timesteps while many proteins take microseconds to milliseconds to fold.
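A back-of-the-envelope calculation shows why folding simulations are so demanding. The sketch below assumes a 2-femtosecond timestep, which is typical for all-atom molecular dynamics, and a hypothetical throughput of 100 nanoseconds of simulated time per day on one machine; real throughput varies widely with system size and hardware, and the constant and function names are illustrative.

```python
# Assumed values for illustration, not measurements from any specific project.
TIMESTEP_FS = 2              # typical all-atom MD timestep, in femtoseconds
FS_PER_MS = 10**12           # femtoseconds in one millisecond
NS_PER_MS = 10**6            # nanoseconds in one millisecond
ASSUMED_NS_PER_DAY = 100.0   # hypothetical single-machine throughput


def steps_needed(simulated_fs: int, timestep_fs: int = TIMESTEP_FS) -> int:
    """Number of integration steps needed to cover a span of simulated time."""
    return simulated_fs // timestep_fs


def days_on_one_machine(simulated_ns: float, ns_per_day: float = ASSUMED_NS_PER_DAY) -> float:
    """Wall-clock days for one machine to simulate `simulated_ns` nanoseconds."""
    return simulated_ns / ns_per_day


def aggregate_ns_per_day(n_machines: int, ns_per_day: float = ASSUMED_NS_PER_DAY) -> float:
    """Total simulated time per day summed over many independent machines."""
    return n_machines * ns_per_day


print(steps_needed(FS_PER_MS))                   # 500000000000
print(days_on_one_machine(NS_PER_MS) / 365.25)   # roughly 27.4 years
print(aggregate_ns_per_day(10_000) / NS_PER_MS)  # 1.0 ms of aggregate time per day
```

Under these assumptions one machine would need about 27 years to simulate a single millisecond, while 10,000 machines produce a millisecond of aggregate simulated time every day. That aggregate consists of many short, independent trajectories rather than one long one, so the results must be combined statistically, for example with Markov state models, an approach Folding@home has used.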

In 2000, researchers at Stanford University launched Folding@home, a distributed computing project that harnesses the idle processing cycles of personal computers volunteered by people around the world, eventually numbering in the hundreds of thousands. Volunteers simply download a client application that automatically runs protein folding simulations whenever their machines are otherwise idle.
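Because volunteers can switch off or reclaim their machines at any time, a volunteer-computing server cannot assume that every task it hands out will come back. One common safeguard is to give each work unit a deadline and reissue it if no result arrives in time; BOINC, for example, attaches deadlines to its tasks. The sketch below is a hypothetical in-memory illustration of that idea, not Folding@home's actual server protocol: the class and method names are invented, and time is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class WorkUnit:
    unit_id: int
    payload: str
    assigned_to: Optional[str] = None  # volunteer currently holding the unit
    deadline: Optional[float] = None   # time at which that assignment expires
    result: Optional[str] = None       # filled in once a result is accepted


class WorkQueue:
    """Hypothetical in-memory queue that reissues units whose deadline passed."""

    def __init__(self, payloads: List[str], timeout: float) -> None:
        self.timeout = timeout
        self.units = [WorkUnit(i, p) for i, p in enumerate(payloads)]

    def request_work(self, volunteer: str, now: float) -> Optional[WorkUnit]:
        """Give `volunteer` the first unit that is unassigned or overdue."""
        for unit in self.units:
            if unit.result is not None:
                continue  # already finished
            overdue = unit.deadline is not None and now > unit.deadline
            if unit.assigned_to is None or overdue:
                unit.assigned_to = volunteer
                unit.deadline = now + self.timeout
                return unit
        return None  # every open unit is currently held by someone

    def submit(self, unit_id: int, volunteer: str, result: str) -> bool:
        """Accept a result only from the volunteer currently holding the unit.

        A late result is still accepted if the unit has not been reissued yet.
        """
        unit = self.units[unit_id]
        if unit.result is not None or unit.assigned_to != volunteer:
            return False
        unit.result = result
        return True

    def all_done(self) -> bool:
        return all(unit.result is not None for unit in self.units)


queue = WorkQueue(["unit-a", "unit-b"], timeout=10.0)
queue.request_work("alice", now=0.0)  # alice gets unit 0, due at t=10
queue.request_work("bob", now=1.0)    # bob gets unit 1, due at t=11
# Alice's machine goes offline; after t=10 her unit is handed to Carol.
print(queue.request_work("carol", now=10.5).unit_id)  # 0
```

A production system would also replicate units across several volunteers and cross-check the returned results, since a volunteer's answer may be wrong; BOINC supports this kind of redundancy.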

Over the next two decades, Folding@home grew into one of the largest distributed computing networks in the world. In early 2020, a surge of volunteers joining to run COVID-19 simulations pushed its combined throughput past one exaflop, more than the world's fastest supercomputer at the time. This crowd-sourced power enabled simulations of protein folding and dynamics on timescales that were out of reach for conventional supercomputing. The project's success showed that distributed computing was not a niche technique but a transformative force in computational biology.

The Rise of Citizen Science

Distributed computing has had another profound impact on computational biology by democratizing scientific research and making it accessible to the general public. Projects like Folding@home and Rosetta@home showed that ordinary people, armed with nothing more than a personal computer, could make meaningful contributions to human knowledge.

This concept of "citizen science" has blossomed in the 21st century, with a growing number of initiatives inviting volunteers to take part in everything from disease research to climate modeling. Platforms have lowered the barriers to entry: BOINC lets anyone donate idle computing time to many research projects, while Zooniverse invites volunteers to classify images and other data themselves. As a result, anyone with a computer can become a citizen scientist and make a tangible impact.

The rise of citizen science has not only expanded the computing power available to computational biology but also brought new perspectives and creativity to the field. Volunteers have made unexpected discoveries and proposed novel hypotheses: in 2011, for example, players of the online protein-folding game Foldit helped determine the structure of a retroviral protease that had resisted researchers for years. Contributions like these push the boundaries of what's possible in ways that traditional scientific institutions would have found difficult to achieve alone.

The Future of Distributed Computational Biology

As computing power continues to grow and the challenges facing biology become ever more complex, distributed computing is expected to play an even larger role. Tanaka's original vision of a "mosaic of disparate machines" propelling biological research has become a reality, and the frontiers of what's possible continue to expand.

Experts predict that in the coming decades, distributed computing will be central to breakthroughs in areas like personalized medicine, climate change modeling, and even the search for extraterrestrial life. As the world's computing resources become increasingly decentralized and democratized, the power to unlock the secrets of the natural world will rest in the hands of not just a few elite institutions, but anyone with a computer and a curious mind.
