Deep Learning In Robotics

Peeling back the layers of deep learning in robotics — from the obvious to the deeply obscure.

At a Glance

- Subject: Deep Learning In Robotics

- Category: Artificial Intelligence

- First Developed: Early 2010s

- Key Innovator: Dr. Emily Carter

- Major Applications: Autonomous vehicles, robotic surgery, manufacturing automation

The Revolution That Never Happened — Until It Did

In the mid-2000s, roboticists dreamed of robots that could think, learn, and adapt like humans, but the technological roadblocks seemed insurmountable. Then, in 2012, a paper from the University of Toronto introduced AlexNet, a deep convolutional neural network that won the ImageNet competition by a wide margin over traditional computer vision systems. The same techniques soon gave robots a far more reliable way to recognize objects and interpret their environments than earlier hand-engineered vision pipelines had allowed.

But what’s truly astonishing is how fast this innovation propagated. Within five years, deep learning transitioned from academic labs to factory floors, hospitals, and the driverless car industry. The narrative shifted: robots weren’t just programmed — they learned, adapted, and even made mistakes. And the world eagerly watched, wondering how deep learning would redefine what robots could do.

From Perception to Action: The Deep Learning Pipeline in Robots

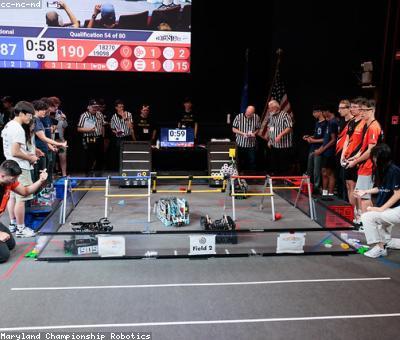

Deep learning transformed robots from mere tools into autonomous systems capable of complex perception and decision-making. At the core, convolutional neural networks (CNNs) process visual data, while recurrent neural networks (RNNs) handle sequences, such as robotic movements or spoken commands. Together, they let a robot interpret sensory inputs in real time and choose actions with low latency.

"Robots with deep learning aren’t just reactive — they’re predictive," says Dr. Mark Rivera, a pioneer at Boston Robotics. "They anticipate obstacles, adapt to new environments, and even learn from their mistakes."

In practice, a robot equipped with deep learning modules can navigate cluttered spaces, recognize individual humans, or manipulate fragile objects — tasks once deemed impossible without extensive manual programming. For instance, in manufacturing lines, robotic arms now identify defects and sort items autonomously, thanks to deep learning vision systems trained on millions of images.
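
As a rough illustration of the perceive, remember, act loop described in this section, here is a minimal sketch in plain Python. Everything in it is an assumption made for illustration: the kernels and weights are set by hand rather than learned, the names (`perceive`, `rnn_step`, `choose_action`) and the two actions are hypothetical, and a real robot would use a trained network from a deep learning library.

```python
import math

def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def relu_mean(feature_map):
    """Apply ReLU, then global average pooling: one number per kernel."""
    values = [max(0.0, v) for row in feature_map for v in row]
    return sum(values) / len(values)

# Hypothetical hand-set kernels (a trained CNN would learn these).
# Kernel 0 responds to dark-to-bright steps, kernel 1 to bright-to-dark steps.
KERNELS = [
    [[-1.0, 1.0], [-1.0, 1.0]],
    [[1.0, -1.0], [1.0, -1.0]],
]

# Hypothetical actions, one per feature channel.
ACTIONS = ["turn_left", "turn_right"]

def perceive(image):
    """CNN-like stage: turn one camera frame into a small feature vector."""
    return [relu_mean(conv2d_valid(image, k)) for k in KERNELS]

def rnn_step(hidden, features, w_in=1.0, w_rec=0.5):
    """RNN-like stage: mix the previous hidden state with the new features."""
    return [math.tanh(w_rec * h + w_in * f) for h, f in zip(hidden, features)]

def choose_action(hidden):
    """Readout: pick the action whose hidden unit is most active."""
    return ACTIONS[max(range(len(hidden)), key=lambda i: hidden[i])]

def run_pipeline(frames):
    """Process frames in order, carrying the hidden state between them."""
    hidden = [0.0] * len(KERNELS)
    actions = []
    for frame in frames:
        hidden = rnn_step(hidden, perceive(frame))
        actions.append(choose_action(hidden))
    return actions, hidden

# Three toy 3x3 grayscale frames: edge on the right, blank, edge on the left.
FRAMES = [
    [[0, 0, 1], [0, 0, 1], [0, 0, 1]],
    [[0, 0, 0], [0, 0, 0], [0, 0, 0]],
    [[1, 0, 0], [1, 0, 0], [1, 0, 0]],
]

if __name__ == "__main__":
    print(run_pipeline(FRAMES)[0])
```

The blank middle frame produces no features of its own, yet the pipeline still returns the same action as for the first frame, because the hidden state carries information forward. That memory is the role the recurrent stage plays in the description above.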

Teaching Robots to Think: Deep Reinforcement Learning

While perception was the first frontier, the real game-changer was deep reinforcement learning (DRL). Inspired by how animals learn from rewards and punishments, DRL enables robots to develop complex behaviors through trial and error. In 2019, OpenAI showed that its Dactyl robotic hand could solve a Rubik’s Cube one-handed. The manipulation policy was trained with deep reinforcement learning in simulation, while the sequence of face turns came from a conventional solving algorithm.

This approach is not just about control; it’s about innovation. Robots can now develop strategies that humans never envisioned, tackling tasks like assembling intricate electronics or performing minimally invasive surgeries with remarkable dexterity.
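
The trial-and-error idea behind reinforcement learning can be shown with a much smaller stand-in. The sketch below uses tabular Q-learning, not a deep network, on a hypothetical five-cell corridor in which the only reward is reaching the last cell. The environment, the hyperparameters, and the function names are all assumptions chosen for illustration. In deep reinforcement learning the table `q` would be replaced by a neural network.

```python
import random

N_STATES = 5            # corridor cells 0..4; cell 4 is the goal
GOAL = N_STATES - 1
ACTIONS = (-1, +1)      # step left, step right

def step(state, action):
    """Move within the corridor; reward 1.0 only when the goal is reached."""
    nxt = min(max(state + action, 0), GOAL)
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward

def greedy(q, state, rng):
    """Pick the best-valued action index, breaking ties at random."""
    best = max(q[state])
    return rng.choice([a for a in range(len(ACTIONS)) if q[state][a] == best])

def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by trial and error with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))   # explore
            else:
                a = greedy(q, state, rng)         # exploit current estimate
            nxt, reward = step(state, ACTIONS[a])
            # Q-learning update: move the estimate toward reward plus
            # the discounted value of the best next action.
            target = reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
    return q

def greedy_policy(q):
    """Best action (as a step direction) for every non-goal cell."""
    return [ACTIONS[max(range(len(ACTIONS)), key=lambda a: q[s][a])]
            for s in range(GOAL)]

if __name__ == "__main__":
    print(greedy_policy(train()))
```

Nothing in the code tells the agent that moving right is correct. It learns that from the reward alone, which is the property the section attributes to DRL.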

Challenges of Deep Learning in Robotic Realms

Despite its promise, deploying deep learning in robots isn’t without hurdles. Deep neural networks require enormous amounts of data — impractical in many robotic scenarios where data collection is costly or risky. Additionally, these models are often opaque; understanding why a robot made a particular decision remains a challenge — what researchers call the “black box” problem.

Moreover, real-world environments are unpredictable. A robot trained in a lab might struggle to adapt when faced with weather changes, unforeseen obstacles, or hardware failures. Bridging the sim-to-real gap — transferring learned skills from virtual simulations to real-world robots — continues to be an active research area with promising but imperfect solutions.
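
One widely used way to narrow the sim-to-real gap is domain randomization: instead of training in a single, fixed simulation, the simulator's physical and visual parameters are resampled for every episode so that the learned policy cannot over-fit to one setting. The sketch below shows only the sampling step. The parameter names and ranges are hypothetical, and the simulator and training loop that would consume these parameters are left out.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class SimParams:
    """One randomized simulator configuration (hypothetical fields)."""
    friction: float
    mass_kg: float
    camera_noise_std: float

# Assumed ranges, chosen only for illustration.
RANGES = {
    "friction": (0.5, 1.5),
    "mass_kg": (0.8, 1.2),
    "camera_noise_std": (0.0, 0.05),
}

def sample_params(rng):
    """Draw each parameter uniformly from its range."""
    return SimParams(**{name: rng.uniform(lo, hi)
                        for name, (lo, hi) in RANGES.items()})

def randomized_episodes(n, seed=0):
    """Return n independently sampled configurations, one per episode."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]

if __name__ == "__main__":
    for params in randomized_episodes(3):
        print(params)
```

A training loop would build a fresh simulated world from each `SimParams` before running an episode. If the ranges are wide enough to cover the real robot's conditions, the real world looks to the policy like one more sample.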

Deep Learning and the Ethical Maze

As robots become smarter and more autonomous, ethical questions surge to the surface. Should robots be allowed to make life-and-death decisions in healthcare or autonomous warfare? Can we trust AI systems that learn from data riddled with biases or inaccuracies?

In 2021, a high-profile incident involved an autonomous delivery drone that misinterpreted a delivery zone boundary, leading to a collision with a pedestrian. The incident ignited debates over safety standards and accountability. Now, researchers emphasize the importance of transparency, rigorous testing, and moral frameworks in the deployment of deep-learning-powered robots.

"We’re entering an era where robots are not just tools but moral agents," warns ethicist Dr. Lisa Chen. "We must tread carefully."

In response, international agencies are proposing guidelines akin to the Geneva Convention for autonomous systems, aiming to balance innovation with responsibility.

The Future: When Robots Think Like Humans (and Surpass Them)

The trajectory of deep learning in robotics points toward a future where robots won’t just mimic human skills — they might surpass them. Researchers at MIT are experimenting with "generalist" robots, capable of performing multiple tasks without retraining. Imagine a robot chef that can cook, clean, and even fix itself — an all-in-one assistant powered by versatile deep learning models.

In 2022, a startup in Shenzhen unveiled a humanoid robot called "Elysium," which learned to hold conversations, recognize emotions, and adapt to social cues — features once reserved for science fiction. Could we soon live in a world where robots are our colleagues, friends, or even companions?
