Algorithmic Redlining

Everything you never knew about algorithmic redlining, from its obscure origins to the surprising ways it shapes the world today.

At a Glance

- Subject: Algorithmic Redlining

- Category: Technology, Bias, Discrimination

The Unseen Hand: How Algorithms Quietly Control Where You Live

Imagine a world where your zip code can determine your entire future. Where the color of your skin decides the opportunities available to you, without you ever realizing it. This is the hidden reality of algorithmic redlining – the startling phenomenon where automated decision-making systems reinforce centuries-old patterns of racial and economic segregation.

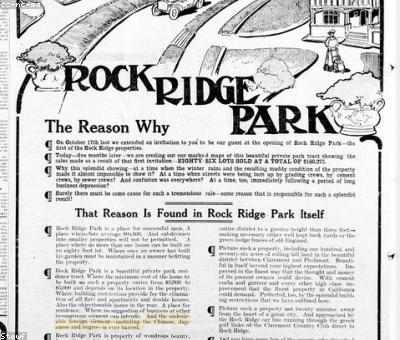

It began innocuously enough, with the rise of credit scoring algorithms in the 1970s. These mathematical models, designed to assess individual creditworthiness, were intended to make lending more efficient and fair. But in the hands of an institutionally biased financial system, they quickly became tools of exclusion. Subtle biases in the data and design of these algorithms led them to systematically disadvantage borrowers from minority neighborhoods, trapping entire communities in cycles of poverty and unequal access.

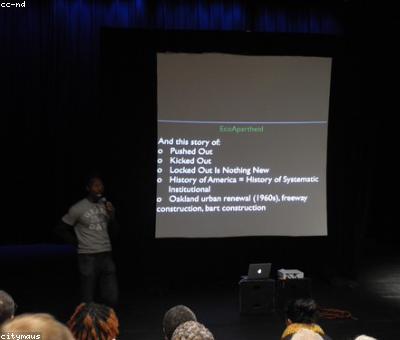

The problem only snowballed as these automated decision-makers wormed their way into ever more aspects of modern life. Hiring algorithms sort job applicants. Predictive policing algorithms decide where law enforcement resources are deployed. Housing algorithms determine who gets approved for mortgages and rentals. At each step, the invisible biases baked into the algorithms' training data and design choices translated into very real, very human consequences – shaping opportunity, mobility, and entire life trajectories along racial and socioeconomic lines.

The Unintended Consequences of Automation

Perhaps the most insidious aspect of algorithmic redlining is how unintentional it often is. The engineers and data scientists behind these systems are rarely malicious actors. They are simply trying to optimize for efficiency, scalability, and the appearance of fairness. But because they are working within a society already rife with structural inequalities, their technical "solutions" end up magnifying and perpetuating those very problems.

"The math doesn't lie – but the people who design the math, and the assumptions they've built in, very much do." — Dr. Safiya Umoja Noble, author of Algorithms of Oppression

Take the example of "risk-based pricing" in the insurance industry. Algorithms that charge higher premiums to customers in high-crime neighborhoods may seem rational from an actuarial standpoint. But these neighborhoods are often low-income communities of color that have faced generations of disinvestment and over-policing, so the neighborhood acts as a stand-in for race and income even when neither appears in the model. The result? Families in these areas pay more for essential coverage, compounding the financial strain they already face.
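To make the mechanism concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the base premium, the surcharge table, the group labels, and the toy household data are invented for illustration and do not describe any real insurer. The pricing function only ever sees the neighborhood's crime tier, yet the average premium still differs by group because the groups are unevenly distributed across neighborhoods.

```python
from statistics import mean

# Hypothetical pricing parameters, invented for illustration only.
BASE_PREMIUM = 1000.0
SURCHARGE = {"low": 0.0, "high": 0.25}  # surcharge by neighborhood crime tier


def premium(crime_tier):
    """Price a policy from the neighborhood crime tier alone (no group input)."""
    return BASE_PREMIUM * (1 + SURCHARGE[crime_tier])


def average_premium_by_group(households):
    """Average the quoted premium per group for (group, crime_tier) records."""
    by_group = {}
    for group, tier in households:
        by_group.setdefault(group, []).append(premium(tier))
    return {group: mean(prices) for group, prices in by_group.items()}


# Toy data: group_y households are concentrated in "high" tier neighborhoods.
households = (
    [("group_x", "low")] * 8 + [("group_x", "high")] * 2
    + [("group_y", "low")] * 3 + [("group_y", "high")] * 7
)

averages = average_premium_by_group(households)
gap = averages["group_y"] - averages["group_x"]
print(averages, gap)
```

Under these made-up numbers, the model never receives group membership, but group_y still pays more on average. Removing a protected attribute from the inputs does not remove its effect when another input is correlated with it.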

Fighting Back Against the Machine

Dismantling the complex web of algorithmic redlining will require a multifaceted approach. First and foremost, we need greater transparency and accountability around the data, models, and decision-making processes underlying these systems. Companies and governments must be compelled to open the black box, revealing the hidden biases and flaws that are having such profound impacts on people's lives.

Simultaneously, we need to invest in developing fair, equitable AI that is explicitly designed to counteract historical discrimination, not perpetuate it. This means diversifying the teams building these algorithms, interrogating the data they're trained on, and implementing robust testing and auditing measures to identify and mitigate biased outputs.
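One simple form such an audit can take is a comparison of approval rates across groups. The sketch below is only an illustration under stated assumptions: the function names, group labels, and toy decisions are hypothetical, and the 0.8 cutoff borrows the "four-fifths" rule of thumb from US employment guidelines as a screening threshold, not as a legal test or a complete fairness measure.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group for (group, approved) records."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions, protected, reference):
    """Ratio of the protected group's approval rate to the reference group's."""
    rates = approval_rates(decisions)
    if rates[reference] == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return rates[protected] / rates[reference]


# Hypothetical toy decisions: group A approved 80 of 100, group B 50 of 100.
decisions = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)

ratio = disparate_impact_ratio(decisions, protected="B", reference="A")
flagged = ratio < 0.8  # assumed screening threshold (four-fifths rule of thumb)
print(round(ratio, 3), flagged)
```

A ratio below the threshold does not prove discrimination, and a ratio above it does not rule it out. It is a first-pass signal that tells auditors where to look more closely at the data and design choices behind a system.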

Most critically, we must change the way we think about these automated decision-makers. They are not neutral, objective tools – they are products of a society with a long history of systemic racism and economic injustice. Confronting algorithmic redlining requires reckoning with those deeper, thornier issues at the heart of our communities.

A Future Beyond Bias

Algorithmic systems were supposed to deliver a more just, efficient world. Instead, we have inadvertently created new digital infrastructures of oppression that replicate and amplify the same biases that have plagued us for centuries.

Yet there is hope. By shining a light on these invisible forces, we can begin to dismantle them. A future where technology is a tool of empowerment, not exclusion, is within our grasp. It will take hard work, vigilance, and a willingness to confront uncomfortable truths. But the alternative, allowing algorithms to silently shape the arc of human lives, carries too high a price.
