Algorithmic Bias Ethics

Everything you never knew about algorithmic bias ethics, from its obscure origins to the surprising ways it shapes the world today.

At a Glance

- Subject: Algorithmic Bias Ethics

- Category: Technology, Ethics, AI

The Overlooked Origins of Algorithmic Bias

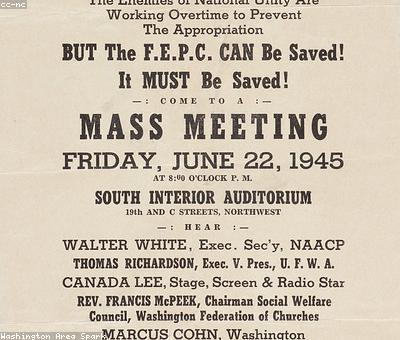

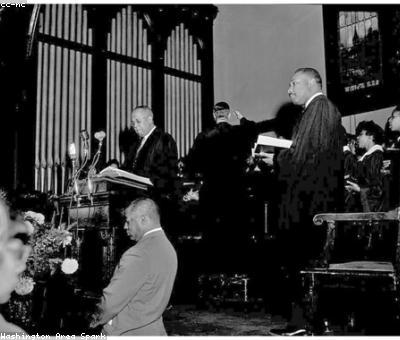

Far from being a purely modern concern, the ethical challenges posed by algorithmic bias are often traced back to the 1950s, when electronic computers were still in their infancy. In 1953, a mathematician named Dorothy Vaughan reportedly published a paper outlining the potential risks of biased data informing complex decision-making systems. Vaughan, a pioneering African-American woman working at the Langley laboratory of NACA (the agency that became NASA in 1958), is said to have warned that "the machines we create will only be as unbiased as the humans who program them."

Vaughan's warnings went largely unheeded for decades, as the computing revolution accelerated with little regard for its ethical pitfalls. Public awareness of algorithmic bias reached a tipping point only in the 2010s, sparked by high-profile incidents such as Google's photo tagging algorithm labeling Black people as "gorillas" in 2015 and reports in 2018 that an experimental Amazon AI recruitment tool had penalized résumés from women.

The Insidious Ways Bias Creeps Into Algorithms

At its core, the problem of algorithmic bias stems from the simple fact that algorithms are created by humans – and humans are inherently biased, whether we realize it or not. Even the most well-intentioned data scientists and engineers can inadvertently bake their own unconscious biases into the algorithms they design.

One of the most common ways bias manifests is through the training data used to "teach" machine learning models. If that data is skewed or unrepresentative (for example, if a facial recognition system is trained primarily on images of white faces), the resulting model will tend to be less accurate at identifying people of color. The problem is compounded when the teams that assemble large training datasets lack diversity themselves, a long-standing criticism of the tech industry.
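A first check for the kind of skew described above is simply to measure how much of the training data each group contributes. The sketch below is illustrative only: the group labels, the toy dataset, and the 20% `min_share` threshold are assumptions made for this example, not an established standard.

```python
from collections import Counter


def group_shares(groups):
    """Return each group's share of the dataset as a fraction of all rows."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def underrepresented(groups, min_share=0.2):
    """List groups whose share falls below min_share (an assumed threshold)."""
    shares = group_shares(groups)
    return sorted(g for g, share in shares.items() if share < min_share)


# Hypothetical group labels attached to ten training images.
training_groups = ["A"] * 8 + ["B"] * 1 + ["C"] * 1

shares = group_shares(training_groups)
flagged = underrepresented(training_groups, min_share=0.2)

print(shares)
print(flagged)
```

A balanced dataset does not guarantee a fair model, so a check like this is a starting point rather than proof of fairness.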

"Algorithms are not neutral. They are Trojan horses carrying the priorities and prejudices of their creators." - Cathy O'Neil, author of "Weapons of Math Destruction"

But biased data is just the tip of the iceberg. Algorithmic bias can also arise from the subjective choices made by programmers, such as how to define "success" or "relevance" within a system, or which variables to include (or exclude) when building a predictive model. Even the wording of questions asked in a user interface can inadvertently steer results in a certain direction.

The High Stakes of Algorithmic Bias

The consequences of unchecked algorithmic bias can be severe, with life-altering impacts on individuals and communities. In the criminal justice system, investigations of risk assessment algorithms have found that Black defendants were more likely than white defendants to be wrongly flagged as high risk, a score that can influence bail and sentencing decisions. In financial services, lending algorithms have been found to deny mortgages to qualified borrowers of color or to charge them higher rates. And in healthcare, algorithms that used past spending as a stand-in for medical need have underestimated the needs of marginalized populations.
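One way to make the criminal justice example concrete is to compare false positive rates across groups: among people who did not reoffend, what fraction were still flagged as high risk? The following is a minimal sketch with invented toy records. The function names and the data are hypothetical and do not reproduce any real tool or study.

```python
def false_positive_rate(flagged_high_risk, reoffended):
    """Share of people who did NOT reoffend but were still flagged high risk."""
    flags_for_negatives = [
        flag for flag, outcome in zip(flagged_high_risk, reoffended) if not outcome
    ]
    if not flags_for_negatives:
        raise ValueError("no non-reoffending cases to evaluate")
    return sum(flags_for_negatives) / len(flags_for_negatives)


def fpr_by_group(records):
    """Compute the false positive rate for each group.

    records: iterable of (group, flagged_high_risk, reoffended) tuples.
    """
    grouped = {}
    for group, flag, outcome in records:
        flags, outcomes = grouped.setdefault(group, ([], []))
        flags.append(flag)
        outcomes.append(outcome)
    return {g: false_positive_rate(f, o) for g, (f, o) in grouped.items()}


# Invented toy data: (group, flagged as high risk, actually reoffended).
records = [
    ("X", 1, 0), ("X", 1, 0), ("X", 0, 0), ("X", 0, 0), ("X", 1, 1),
    ("Y", 1, 0), ("Y", 0, 0), ("Y", 0, 0), ("Y", 0, 0), ("Y", 1, 1),
]

rates = fpr_by_group(records)
print(rates)
```

In this toy data, group X is wrongly flagged twice as often as group Y even though both groups have the same number of actual reoffenders, which is the pattern a fairness audit would look for.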

Ethical Frameworks for Responsible AI

As the risks of algorithmic bias have become increasingly clear, a growing movement of technologists, ethicists, and policymakers has worked to develop frameworks for building more responsible and equitable AI systems. Key principles include:

- Diversity and Inclusion: Ensuring the teams developing AI are representative of the populations those systems will impact.

- Transparency and Accountability: Requiring algorithm creators to document their design choices and testing processes.

- Ongoing Monitoring and Adjustment: Continuously evaluating AI systems for emerging biases and making adjustments.

- Human Oversight and Appeal Processes: Maintaining human review for high-stakes decisions and giving affected individuals a way to contest algorithm-based determinations.
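The monitoring and human-oversight principles above can be sketched as a periodic check that compares approval rates across groups and escalates to a human reviewer when the gap grows too large. This is a sketch under stated assumptions: the function names and sample decisions are hypothetical, and the 0.8 threshold borrows the "four-fifths" rule of thumb from US employment-selection guidance, which may not suit other domains.

```python
def selection_rates(decisions):
    """Return the approval rate per group.

    decisions: iterable of (group, approved) pairs, where approved is a bool.
    """
    totals, approvals = {}, {}
    for group, approved in decisions:
        totals[group] = totals.get(group, 0) + 1
        approvals[group] = approvals.get(group, 0) + int(approved)
    return {g: approvals[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no approvals in any group; ratio is undefined")
    return min(rates.values()) / highest


def needs_human_review(decisions, threshold=0.8):
    """Flag a batch of decisions for review when the ratio drops below threshold.

    The 0.8 default is an assumption based on the four-fifths rule of thumb.
    """
    return disparate_impact_ratio(decisions) < threshold


# Hypothetical batch: group A approved 6 of 10 times, group B 3 of 10.
batch = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

ratio = disparate_impact_ratio(batch)
review = needs_human_review(batch)
print(ratio, review)
```

A single ratio cannot capture every form of unfairness, so a check like this is best treated as a trigger for human investigation rather than a verdict.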

While putting these principles into practice remains an ongoing challenge, there are signs of progress. In 2019, the European Union's High-Level Expert Group on AI published its Ethics Guidelines for Trustworthy AI, and in 2021 the European Commission proposed the AI Act, a regulation with requirements around algorithmic fairness and transparency for high-risk systems. Major tech companies like Microsoft and Google have also published ethical principles for their own AI projects.

The Future of Algorithmic Bias Ethics

As AI systems become increasingly pervasive in our daily lives, powering everything from social media feeds to criminal risk assessments, the stakes for addressing algorithmic bias have never been higher. While much work remains to be done, the growing awareness and concerted efforts around this issue offer hope that the human rights challenges posed by biased algorithms can be addressed.

Ultimately, the future of algorithmic bias ethics will hinge on our ability to build AI that is not only technologically sophisticated, but also grounded in principles of fairness, transparency, and accountability. Only by keeping the human element at the center of these systems can we hope to create a future where the machines we build truly serve the greater good.
