AI Regulations

AI regulation sits at the crossroads of technology, law, and public policy. This overview traces how it emerged, what has been proposed so far, and which problems remain open.

At a Glance

- Subject: AI Regulations

- Category: Technology, Artificial Intelligence, Law and Policy

- Key Origins: The rise of powerful AI systems in the early 21st century, growing public concern over the risks of unregulated AI, and the need to establish ethical guidelines and legal frameworks.

The Urgent Need for AI Regulations

In the early decades of the 21st century, rapid advances in artificial intelligence (AI) sparked both excitement and trepidation. As AI systems grew more sophisticated and began tackling complex tasks once thought the sole domain of human intelligence, concerns grew over the risks and unintended consequences of unchecked AI development.

Futurists and policymakers raised alarms about the existential threat of "superintelligent" AI, which could surpass human capabilities and act in ways detrimental to humanity. There were also pressing issues around AI algorithms perpetuating societal biases, the displacement of human workers, autonomous weapons, and the erosion of privacy and personal data rights.

Forging a Global Regulatory Framework

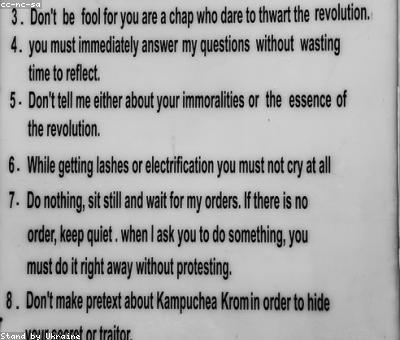

As the potential perils of unchecked AI became increasingly clear, governments and international organizations recognized the urgent need to establish a regulatory framework. In April 2021, the European Commission took a significant step forward by proposing the Artificial Intelligence Act (AIA), a sweeping set of rules and restrictions aimed at mitigating the risks of AI.

The AIA proposed a tiered, risk-based system that sorts AI applications into four categories: "unacceptable risk," "high risk," "limited risk," and "minimal risk." Unacceptable-risk AI, such as government social scoring systems, would be banned outright, and real-time remote biometric identification in public spaces by law enforcement would be prohibited with narrow exceptions. High-risk AI, including systems used in healthcare, law enforcement, and employment, would be subject to strict requirements around transparency, human oversight, and data quality. Limited-risk AI, such as chatbots, would carry transparency obligations, while minimal-risk AI would be largely left to voluntary codes of conduct.
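The tiered scheme can be pictured as a simple lookup from use case to tier to obligations. The following is a minimal sketch, not a statement of the law: it uses the four tier names from the Commission's proposal (unacceptable, high, limited, minimal), and the use-case labels, the obligation strings, and the `obligations_for` helper are all hypothetical shorthand chosen for illustration.

```python
from enum import Enum
from typing import Dict, List


class RiskTier(Enum):
    """Risk tiers named in the Commission's 2021 proposal."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case labels (assumption): shorthand for this example,
# not categories defined in the Act itself.
EXAMPLE_USE_CASES: Dict[str, RiskTier] = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "hiring_screening": RiskTier.HIGH,
    "medical_triage": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}

# Simplified obligations per tier (assumption): a summary for illustration,
# not the legal text.
OBLIGATIONS: Dict[RiskTier, List[str]] = {
    RiskTier.UNACCEPTABLE: ["prohibited"],
    RiskTier.HIGH: ["transparency", "human oversight", "data quality"],
    RiskTier.LIMITED: ["disclosure to users"],
    RiskTier.MINIMAL: [],
}


def obligations_for(use_case: str) -> List[str]:
    """Return the illustrative obligations attached to a known use case."""
    tier = EXAMPLE_USE_CASES.get(use_case)
    if tier is None:
        # Unknown systems need a case-by-case assessment; fail loudly here.
        raise KeyError(f"unclassified use case: {use_case!r}")
    return OBLIGATIONS[tier]


if __name__ == "__main__":
    for name in EXAMPLE_USE_CASES:
        print(name, "->", obligations_for(name))
```

In practice, classification depends on context and on the Act's detailed annexes, so a static table like this only conveys the overall shape of the risk-based approach.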

"The Artificial Intelligence Act is a landmark piece of legislation that will set the global standard for AI governance. Its comprehensive approach to regulating high-risk AI applications is a crucial step in ensuring that technological progress aligns with fundamental human rights and democratic values." - Ursula von der Leyen, President of the European Commission

The Race to Catch Up

While the EU took the lead in AI regulation, other nations quickly followed suit. In October 2022, the White House released its Blueprint for an AI Bill of Rights, a non-binding set of principles meant to protect individuals from the risks of AI systems. China, Russia, and other major powers also developed their own national AI strategies and regulatory frameworks.

The race to establish AI governance was further propelled by the increasing globalization of the technology industry. As AI-powered products and services transcended national borders, the need for international coordination and cooperation became paramount. Organizations like the OECD, the UN, and the G7 convened dialogues to develop shared principles and best practices for AI regulation.

The Ongoing Challenges

As AI regulations continue to evolve, a number of complex challenges remain. Keeping pace with the rapid technological advancements in AI is a constant struggle, as regulations risk becoming outdated before they can even be implemented. There are also thorny questions around the appropriate levels of government intervention, the balance between innovation and risk mitigation, and the enforcement of cross-border compliance.

Furthermore, the global nature of the AI industry means that even the most comprehensive national regulations can be undermined by the relocation of AI development and deployment to less-regulated jurisdictions. Addressing this "regulatory race to the bottom" will require unprecedented levels of international coordination and harmonization.

The Future of AI Governance

Despite the challenges, the ongoing efforts to establish robust AI regulations are crucial for safeguarding the future of humanity. As AI systems become more pervasive and influential, the need to enshrine ethical principles and ensure accountable, transparent development has never been greater.

The ultimate goal of AI governance is to unleash the transformative potential of artificial intelligence while mitigating its most pernicious risks. By striking the right balance between innovation and responsibility, policymakers can pave the way for a future where AI becomes a powerful tool in service of human flourishing.
