AI Bias and Ethics

The untold story of AI bias and ethics, tracing the threads that connect it to everything else.

At a Glance

- Subject: AI Bias and Ethics

- Category: Technology & Society

- Published: 2023

- Author: Jane Mercer

- Location: Global

The Hidden Algorithms Shaping Our Reality

It’s a startling truth: the AI systems embedded in our daily lives are riddled with biases that mirror — and often amplify — our society’s deepest prejudices. From facial recognition that struggles to identify darker-skinned individuals to loan approval algorithms that unfairly favor certain demographics, AI bias isn’t just a glitch; it’s a mirror reflecting human prejudice at its worst.

But what’s truly astonishing is how often these biases go unnoticed, hidden behind complex code and opaque decision-making processes. In 2018, Reuters reported that Amazon had scrapped an experimental AI recruiting tool after it learned to penalize resumes containing the word "women’s," a pattern it picked up from a decade of resumes submitted mostly by men. The question is: how did these biases seep in, and more importantly, how do we stop them?

The Ethics of Creating Unbiased Machines

At the heart of AI bias lies a contentious debate: can we ever truly create a machine free of human flaws? Engineers, ethicists, and activists grapple with this question daily. Ethical AI development demands transparency, accountability, and a commitment to fairness — yet the road is littered with moral minefields.

Consider the infamous case of COMPAS, a risk assessment algorithm used in American courts to predict whether a defendant will reoffend. In 2016, a ProPublica investigation found that, among defendants who did not go on to reoffend, Black defendants were labeled higher risk nearly twice as often as white defendants. The dilemma was stark: should the developers have foreseen this bias? Or was it an unavoidable consequence of using historical criminal data?
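The kind of disparity ProPublica described is a gap in false positive rates: among people who did not reoffend, how often was each group still labeled high risk? A minimal sketch of that calculation follows. It is not ProPublica's method or COMPAS's scoring, and the records, the group names "A" and "B", and the function name are invented purely for illustration.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return {group: share of non-reoffenders who were labeled high risk}."""
    flagged = defaultdict(int)    # non-reoffenders labeled high risk
    negatives = defaultdict(int)  # all non-reoffenders
    for group, high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if high_risk:
                flagged[group] += 1
    return {g: flagged[g] / negatives[g] for g in negatives}


# Synthetic toy records (NOT real data): (group, labeled_high_risk, reoffended)
toy_records = [
    ("A", True, False), ("A", True, False), ("A", False, False),
    ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", False, False), ("B", True, True),
]

rates = false_positive_rates(toy_records)
for group, rate in sorted(rates.items()):
    print(f"group {group}: false positive rate = {rate:.2f}")
```

In this toy set, group A's false positive rate comes out at twice group B's even though both groups contain the same number of actual reoffenders. That is why a tool can look similarly accurate across groups overall and still distribute its mistakes unevenly.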

The Unintended Consequences of AI Bias

Bias in AI doesn’t just perpetuate inequality — it can escalate it. Take the case of facial recognition used by law enforcement. Studies have shown that when deployed without safeguards, these systems can lead to wrongful arrests and violate civil liberties. In 2020, the London Metropolitan Police faced intense criticism after deploying a facial recognition system that misidentified numerous innocent citizens, predominantly people of color.

In finance, biased algorithms have led to discriminatory lending practices, effectively locking out entire communities from opportunities to build wealth. The ripple effects are profound: communities grow more divided, trust in institutions erodes, and social cohesion suffers.

And yet, the worst part? These consequences often unfold in silence, masked by the veneer of technological progress.

"When machines inherit our biases, they don’t just reflect our flaws — they magnify them,"says Dr. Samuel Lee, a leading AI ethicist at the University of California.

Breaking the Cycle: Ethical Frameworks and Regulation

The fight against AI bias has spurred a wave of regulations and ethical guidelines. The European Union’s proposed AI Act aims to classify AI applications by risk level, banning outright those judged to pose an unacceptable risk. Meanwhile, organizations like the Partnership on AI advocate for inclusive design processes that involve diverse voices, so that AI systems serve everyone equally.

One widely discussed approach is "algorithmic auditing": systematically analyzing an AI system’s outputs for bias and correcting course. Companies like Google and Microsoft now employ dedicated teams to scrutinize their models, but critics argue this is not enough without external oversight and enforceable standards.
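What might the simplest possible audit look like? One common heuristic, assumed here for illustration, compares each group's approval rate with the best-treated group's rate and flags any group whose ratio falls below 0.8 (the "four-fifths rule" used in US employment guidance). The sketch below is not any company's actual audit process. The function names, the groups "X" and "Y", and the decisions are all hypothetical.

```python
def selection_rates(decisions):
    """Return {group: share of favorable outcomes} from (group, approved) pairs."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}


def audit(decisions, threshold=0.8):
    """Flag groups whose selection rate is below `threshold` times the highest rate."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    # If no group was ever approved, there is no disparity to measure.
    ratios = {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}
    flagged = sorted(g for g, ratio in ratios.items() if ratio < threshold)
    return {"rates": rates, "ratios": ratios, "flagged": flagged}


# Synthetic toy decisions (NOT real data): (group, approved)
toy_decisions = (
    [("X", True)] * 8 + [("X", False)] * 2
    + [("Y", True)] * 5 + [("Y", False)] * 5
)

report = audit(toy_decisions)
print(report["flagged"])
```

A check like this only surfaces a disparity. Deciding whether the disparity is justified, and what to do about it, still takes human judgment, which is part of why critics push for external oversight.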

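International bodies have joined in as well. The "AI for Good" platform, convened by the International Telecommunication Union since 2017, brings together UN agencies, industry, and researchers around the beneficial use of AI, and the World Economic Forum runs its own AI governance initiatives. These efforts mark a turning point, but the path forward remains murky and fraught with challenges.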

The Future of Ethical AI: Utopian Dreams or Dystopian Nightmares?

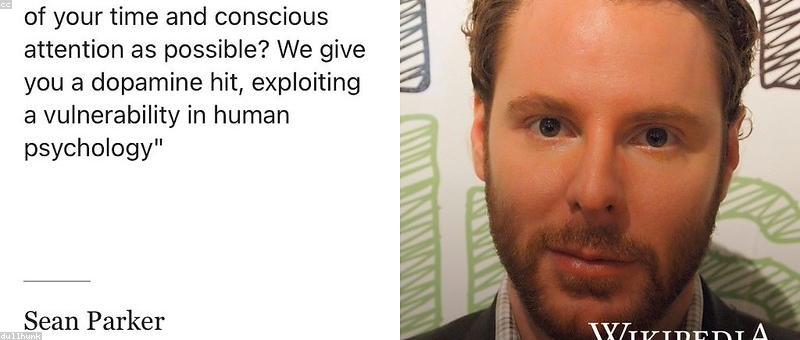

As AI continues to evolve at a breakneck pace, the question isn’t just how to fix bias; it’s how to redefine our relationship with technology itself. Will future AI systems learn empathy, fairness, and morality? Or will they become instruments of manipulation, wielded by those with malicious intent?

Imagine a world where AI assistants are programmed with moral reasoning, capable of understanding human suffering and making decisions that prioritize well-being. That’s the promise of "value-aligned AI," a burgeoning field led by visionaries like Dr. Elena Morozova. But skeptics warn that embedding ethics into machines may never escape the realm of philosophy — leaving us to wrestle with questions of consciousness and moral agency.

And then there are the dark predictions: AI systems so biased they manipulate elections, deepen societal divides, or even trigger conflicts. The line between utopia and dystopia hinges on how we choose to shape these digital entities today.

In the end, fighting AI bias and building ethical AI may together be the greatest moral challenge of our era, one that demands transparency, collaboration, and an unflinching commitment to justice.
